Quality Assurance

Chaos testing: what it is, where it came from, and how to use it

Discover how a Netflix database outage led to the creation of chaos testing and learn how to use this testing approach.

Rustam Sabirov

Chaos testing is a method designed to assess a software system's resilience to unforeseen circumstances or failures.

This software testing approach owes its existence to Netflix. In 2008, following a significant database outage, Netflix's developers opted to migrate their entire infrastructure to AWS (Amazon Web Services). This shift to the cloud was prompted by the need to handle substantially higher streaming loads and the aspiration to move away from a monolithic architecture in favor of microservices, which can scale with both the number of users and the size of the engineering team.

During the migration of Netflix's infrastructure, two fundamental principles were established:

- No system should be susceptible to a single point of failure, where one error or outage could result in extensive unplanned downtime.

- Complete certainty that there are no single points of failure is unattainable. The effective approach is consistent testing and monitoring to confirm that the first principle holds.

This led to the development of chaos testing, which entails the proactive identification of system errors to prevent failures and mitigate adverse impacts on users. The objective was to shift from a development model assuming zero failures to one acknowledging their inevitability — compelling developers to view built-in fault tolerance as an obligation rather than an option.

Chaos testing proactively identifies and rectifies weaknesses in a software system. This approach empowers companies to create more reliable systems, ultimately enhancing overall system performance and user experience. Deliberately disrupting production allows for the controlled detection of issues, enabling the development of solutions and the identification of similar issues before real failures occur.

What is chaos engineering?

Chaos engineering, a broader term encompassing chaos testing, entails the proactive injection of controlled and monitored instances of chaos into a system. This approach aims to identify weaknesses and vulnerabilities before they can culminate in a system failure. Chaos testing, a subset of chaos engineering, specifically focuses on the testing aspect within this discipline.

“Chaos engineering is the discipline of experimenting on a system in order to build confidence in the system’s capability to withstand turbulent conditions in production.” Principles of Chaos Engineering

This practice involves controlled experiments that simulate real-world events, including hardware failures, network outages, database issues, and various types of system errors. Software testers assess how the system behaves and responds to these circumstances. These assessments help developers improve fault tolerance and customer satisfaction and minimize the impact of unforeseen events.

In simpler terms, chaos engineering deliberately induces failures in a production system to assess how the application copes under intentional stress. This methodology extends the evaluation of application quality beyond traditional testing methods, using unexpected and random failure conditions to identify bottlenecks, vulnerabilities, and weaknesses in the system.

The principles of chaos engineering

While there are no universally recognized or official principles of chaos engineering, various sources and experts articulate them in their own ways. Nonetheless, one can identify more or less general approaches to ensure effective system testing.

Define normal system behavior. Ensure your system is functioning normally and define a stable state, for example: the system is operational and the error rate is below one percent.

Specify a hypothesis. Formulate a hypothesis about the stable state that predicts the outcome of the experiment. In other words, the introduced events should not push the system out of its stable state.
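The first two steps can be made concrete in code. The sketch below is a minimal illustration, assuming request and error counts are already collected per measurement window. The 1% threshold comes from the example above, while `Window`, `is_steady`, and `hypothesis_holds` are hypothetical names, not part of any chaos tool.

```python
from dataclasses import dataclass

# Hypothetical steady-state threshold, taken from the example above:
# the system counts as "steady" while fewer than 1% of requests fail.
ERROR_RATE_THRESHOLD = 0.01


@dataclass
class Window:
    """Request counts observed during one measurement window."""
    total: int
    errors: int

    @property
    def error_rate(self) -> float:
        # An empty window is treated as healthy instead of dividing by zero.
        return self.errors / self.total if self.total else 0.0


def is_steady(window: Window, threshold: float = ERROR_RATE_THRESHOLD) -> bool:
    """Steady state: the error rate stays below the agreed threshold."""
    return window.error_rate < threshold


def hypothesis_holds(control: Window, experiment: Window) -> bool:
    """Hypothesis: with the fault injected, the experimental group stays in
    steady state, just like the untouched control group."""
    return is_steady(control) and is_steady(experiment)


control = Window(total=10_000, errors=42)      # 0.42% errors
experiment = Window(total=10_000, errors=180)  # 1.8% errors
print(hypothesis_holds(control, experiment))   # False: the hypothesis is refuted
```

Comparing against a control group that receives no faults helps separate the effect of the experiment from ordinary background noise; if the control group is not steady either, the result is inconclusive rather than a refutation.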

Minimize impact on users. Given that chaos testing involves intentionally disrupting the system, it is crucial to execute it in a manner that minimizes negative impacts on users. Your team should be prepared to respond to incidents if necessary, with clear recovery paths or backup strategies in place.

Introduce chaos. Once you confirm that your system is operational, your team is prepared, and the “blast radius” is confined to pre-agreed areas, you can commence chaos testing on applications. It is advisable to conduct tests in a development environment, allowing you to observe how your service or application responds to events without directly affecting the live version and active users.
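A minimal sketch of how a blast radius and a stop condition might be enforced in code. It assumes the caller supplies the `inject`, `rollback`, and `steady` callbacks; these, like `run_experiment` itself, are hypothetical, and a real setup would call into infrastructure APIs and a monitoring system instead.

```python
import random
from typing import Callable, List, Optional


def run_experiment(
    hosts: List[str],
    blast_radius: float,
    inject: Callable[[str], None],
    rollback: Callable[[str], None],
    steady: Callable[[], bool],
    seed: Optional[int] = None,
) -> List[str]:
    """Inject a fault into at most `blast_radius` (a fraction) of `hosts`,
    one host at a time, stopping as soon as the steady-state check fails.
    Returns the hosts that were affected."""
    if not 0 < blast_radius <= 1:
        raise ValueError("blast_radius must be in (0, 1]")
    if not steady():
        # Never start chaos on a system that is already unhealthy.
        raise RuntimeError("system is not in steady state; refusing to start")

    rng = random.Random(seed)
    limit = min(len(hosts), max(1, int(len(hosts) * blast_radius)))
    affected: List[str] = []
    try:
        for host in rng.sample(hosts, limit):
            inject(host)
            affected.append(host)
            if not steady():
                break  # stop condition reached: do not widen the blast radius
    finally:
        # Roll back every injected fault, whether the run passed, stopped, or crashed.
        for host in reversed(affected):
            rollback(host)
    return affected


hosts = [f"web-{i}" for i in range(10)]
broken = set()
hit = run_experiment(hosts, 0.2, broken.add, broken.discard, lambda: len(broken) < 3, seed=7)
print(len(hit), broken)  # 2 set()
```

Rolling back in a `finally` block is a deliberate choice: the fault is undone even if the monitoring callback itself raises.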

Track and repeat. Consistency is key in chaos engineering. Regularly introduce chaos to systematically identify weaknesses in the system. The ultimate goal of chaos engineering is to forge a more reliable system.

Remember, the principles of chaos engineering may vary, but adherence to these general guidelines can help foster a robust and resilient system through effective testing.

Chaos engineers also often draw inspiration from “The fallacies of distributed computing,” formulated by computer scientist Peter Deutsch and other Sun Microsystems employees. These fallacies highlight common misconceptions about distributed systems. They include:

- The network is reliable

- Latency is zero

- Bandwidth is infinite

- The network is secure

- Topology doesn't change

- There is one administrator

- Transport cost is zero

- The network is homogeneous

These fallacies underscore that networks and systems can never be 100% reliable or perfect. Recognizing these misconceptions provides a foundational perspective when planning chaos experiments.

Netflix introduced Chaos Monkey to simulate real-world scenarios

To assess the resilience of its IT infrastructure, Netflix introduced the innovative Chaos Monkey tool. This tool deliberately disrupts computers within Netflix's production network to observe how other systems respond to such interruptions.

“We discovered that we could align our teams around the notion of infrastructure resilience by isolating the problems created by server neutralization and pushing them to the extreme. We have created Chaos Monkey, a program that randomly chooses a server and disables it during its usual hours of activity...Chaos Monkey is one of our most effective tools to improve the quality of our services.” - Netflix Chaos Monkey Upgraded

The origin of the name “Chaos Monkey” is explained in Antonio Garcia Martinez's book “Chaos Monkeys”:

“In order to understand both the function and the name of the chaos monkey, imagine the following: a chimpanzee rampaging through a data center, one of the air-conditioned warehouses of blinking machines that power everything from Google to Facebook. He yanks cables here, smashes a box there, and generally tears up the place. The software chaos monkey does a virtual version of the same, shutting down random machines and processes at unexpected times. The challenge is to have your particular service — Facebook messaging, Google’s Gmail, your startup’s blog, whatever — survive the monkey’s depredations.”

The Chaos Monkey's role is to randomly disable instances and services in a company's architecture, with the underlying philosophy that every system should thrive despite any adversity. A distributed system is intentionally designed to anticipate and withstand failures from other systems it depends upon.
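Conceptually, the core of a Chaos Monkey-style tool is small. The following is an illustrative sketch, not Netflix's implementation: it assumes instances are grouped by service, that some groups can opt out, and that terminations happen only during an assumed weekday working-hours window. The function name, schedule, and instance IDs are hypothetical.

```python
import random
from datetime import datetime
from typing import Dict, FrozenSet, List, Optional

# Hypothetical schedule: terminate only on weekdays from 09:00 to 14:59,
# so that engineers are at work and can respond.
BUSINESS_HOURS = range(9, 15)


def pick_victim(
    groups: Dict[str, List[str]],
    now: datetime,
    opted_out: FrozenSet[str] = frozenset(),
    rng: Optional[random.Random] = None,
) -> Optional[str]:
    """Return one randomly chosen instance to terminate, or None if it is
    outside business hours or no group is eligible."""
    rng = rng or random.Random()
    if now.weekday() >= 5 or now.hour not in BUSINESS_HOURS:
        return None
    eligible = sorted(g for g, instances in groups.items()
                      if g not in opted_out and instances)
    if not eligible:
        return None
    group = rng.choice(eligible)
    return rng.choice(groups[group])


groups = {"api": ["i-01", "i-02", "i-03"], "billing": ["i-04"]}
victim = pick_victim(groups, datetime(2024, 1, 3, 10, 30))  # a Wednesday morning
# In a real tool, the next step would call the cloud provider's terminate API.
```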

Building upon the success of Chaos Monkey, Netflix developed an extensive suite of tools known as the Simian Army. This arsenal is designed to simulate and evaluate responses to various system failures and extreme scenarios.

Latency Monkey introduces artificial delays in the RESTful client-server communication layer to simulate service degradation and checks whether upstream services respond appropriately. With sufficiently large delays, it can also simulate a node going down, or even an entire service failing, without physically removing any instances.
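The idea behind Latency Monkey can be illustrated in a few lines. This is a hypothetical sketch, not Netflix's code: a Python decorator that sleeps before a configurable fraction of calls to a wrapped client function. `inject_latency` and `get_recommendations` are illustrative names.

```python
import functools
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def inject_latency(delay_s: float, probability: float,
                   rng: Optional[random.Random] = None):
    """Decorator that delays a fraction of calls to simulate a degraded dependency.
    A delay longer than the caller's timeout behaves like the service being down."""
    rng = rng or random.Random()

    def decorator(fn: Callable[..., T]) -> Callable[..., T]:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs) -> T:
            if rng.random() < probability:
                time.sleep(delay_s)  # the injected fault
            return fn(*args, **kwargs)
        return wrapper

    return decorator


@inject_latency(delay_s=0.2, probability=0.5)
def get_recommendations(user_id: str) -> list:
    # Hypothetical stand-in for a REST call to another service.
    return [f"title-for-{user_id}"]
```

Wrapping at the client layer, rather than slowing down the real server, keeps the experiment's blast radius limited to the callers that use the decorated function.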

Conformity Monkey identifies instances that deviate from best practices and disables them.

Security Monkey, an extension of Conformity Monkey, finds security violations or vulnerabilities and shuts down instances that violate security policies. It also checks that SSL and digital rights management (DRM) certificates are valid and not overdue for renewal.

Doctor Monkey uses health checks on each instance, monitoring external signs of health (e.g., CPU load) to detect unhealthy instances. Once identified, unhealthy instances are removed from service and eventually shut down after service owners have had time to resolve the issues.

Janitor Monkey keeps the cloud environment free of clutter by identifying and removing unused resources.

10-18 Monkey (short for Localization-Internationalization, or l10n-i18n) detects configuration and runtime issues on instances serving customers in multiple geographic regions using different languages and character sets.

Chaos Gorilla is similar to Chaos Monkey but simulates an outage of an entire Amazon availability zone. Netflix follows a rule of distributing each service across three availability zones, and Chaos Gorilla validates compliance by shutting down a zone to confirm that services keep working with only two.
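The arithmetic behind this rule is simple: with three zones, losing one leaves two, so each zone needs capacity for at least half of peak load. A small helper, assuming per-zone capacity figures are known (the zone names and numbers below are made up), makes the check explicit. It is a capacity-planning sanity check that complements an experiment like Chaos Gorilla, not the tool itself.

```python
from typing import Dict


def survives_zone_loss(capacity_by_zone: Dict[str, int], peak_load: int) -> Dict[str, bool]:
    """For each zone, report whether the remaining zones can still carry the
    peak load if that zone is taken out."""
    total = sum(capacity_by_zone.values())
    return {zone: total - cap >= peak_load for zone, cap in capacity_by_zone.items()}


# Peak of 900 requests/s spread over three zones.
print(survives_zone_loss({"a": 300, "b": 300, "c": 300}, peak_load=900))
# {'a': False, 'b': False, 'c': False}
print(survives_zone_loss({"a": 450, "b": 450, "c": 450}, peak_load=900))
# {'a': True, 'b': True, 'c': True}
```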

Chaos Kong can shut down an entire AWS region to ensure that all Netflix users can be served from any of its three regions. Large-scale tests are conducted every few weeks in production to validate system robustness.

This growing arsenal of Netflix simians continually tests resilience against various failures, providing the company with confidence in its ability to handle inevitable failures and minimize or eliminate their impact on users.

How do dev teams use chaos testing and chaos engineering?

Before diving into chaos testing, it's crucial to assess whether chaos testing and engineering align with your organization and business needs. While chaos engineering proves effective in enhancing the robustness of large and intricate systems — offering benefits like quicker incident response and fewer unplanned downtimes — it may not be suitable for smaller systems and desktop applications.

Because chaos testing involves production data and real service disruptions, it's important to make sure that no one is harmed by it. Keep internal users in mind as well, such as analytics professionals who rely on current data and customer service staff who will have to deal with the fallout of disruptions.

Typically, an experienced QA specialist leads chaos engineering efforts. They define various test scenarios, execute tests, and monitor results to ensure minimal harm to customers. A seasoned tester knows when to halt experiments if the situation becomes uncontrollable.

For those new to chaos testing, starting with small-scale experiments focused on a single microservice, container, or compute instance is advisable. Scaling up these experiments gradually minimizes potential side effects and allows the team to fine-tune its chaos testing capabilities.

Chaos tests are often viewed as experiments where the unknown is introduced into the system, and the reaction is observed. These experiments should have specific goals, such as assessing how the system responds to a missing component or measuring the impact of increased network latency. Conducting small and targeted experiments helps limit customer impact in the event of failures.

Here is a simple example of a chaos experiment procedure.

Hypothesis: In the event of a sudden increase in incoming traffic, the system should dynamically scale its resources to handle the load without significant performance degradation. Auto-scaling mechanisms should trigger, ensuring optimal resource allocation.

Safe Experiment: Simulate a traffic surge by artificially increasing requests to the system within a controlled test environment. Observe whether auto-scaling mechanisms respond as expected, allowing the system to adapt to increased load while maintaining stability. Terminate the simulated traffic promptly if issues arise.
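The procedure above can be rehearsed as a toy simulation before touching real infrastructure. The sketch assumes a simple target-tracking autoscaler that reacts one step late; `Autoscaler`, `surge_experiment`, and every number are hypothetical, and a real experiment would drive traffic with a load-testing tool and read metrics from monitoring.

```python
import math
from dataclasses import dataclass
from typing import List


@dataclass
class Autoscaler:
    """Toy target-tracking autoscaler (hypothetical; not any cloud provider's API)."""
    instances: int
    capacity_per_instance: int  # requests per tick one instance can serve
    target_utilization: float = 0.7
    max_instances: int = 50

    def adjust(self, load: int) -> None:
        # Size the fleet so this load would run at the target utilization.
        # Scale-in is deliberately not modeled.
        desired = math.ceil(load / (self.capacity_per_instance * self.target_utilization))
        self.instances = min(self.max_instances, max(self.instances, desired))


def surge_experiment(scaler: Autoscaler, loads: List[int], max_error_rate: float = 0.05) -> bool:
    """Replay a traffic surge tick by tick. Returns True if the hypothesis held
    (the share of dropped requests never exceeded max_error_rate); stops early otherwise."""
    for load in loads:
        capacity = scaler.instances * scaler.capacity_per_instance
        dropped = max(0, load - capacity)
        if load and dropped / load > max_error_rate:
            return False  # stop condition: end the simulated surge immediately
        scaler.adjust(load)  # scaling takes effect on the next tick
    return True


ramp = Autoscaler(instances=2, capacity_per_instance=100)
print(surge_experiment(ramp, [100, 150, 180, 220, 260]), ramp.instances)  # True 4
spike = Autoscaler(instances=2, capacity_per_instance=100)
print(surge_experiment(spike, [100, 1000]))  # False
```

The gradual ramp confirms the hypothesis, while the sudden spike refutes it because the autoscaler cannot react within a single tick; that second result is exactly the kind of finding a chaos experiment is meant to surface.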

Adopting structured procedures, like the one outlined above, ensures safe chaos engineering under controlled conditions. Despite intentionally disrupting elements in production, chaos testing should never lead to incidents prolonged enough for customers to notice and complain. Make sure you can quickly roll back any changes.

Successful chaos testing relies on seamless collaboration between DevOps and QA testing teams. The DevOps team has the recovery skills needed to restore production servers to normal, while testers disrupt internal and hardware connections to assess the impact. Coordination between development, deployment, and support teams is essential for effective chaos testing.

Additionally, running tests during non-peak hours helps minimize the impact on customer operations, preserving brand reputation. By leveraging the capabilities of both DevOps and QA teams, chaos testing becomes a valuable tool for enhancing system resilience without compromising customer experience.

Types of chaos engineering experiments

The application of chaos engineering can range from straightforward manual actions — like running “kill -9” in a test environment to simulate service failure — to more intricate automated experiments in a production environment with a small but statistically significant portion of live traffic.
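At the manual end of the spectrum, `kill -9` sends `SIGKILL`, which a process cannot catch or ignore. The sketch below does the same from Python on a throwaway child process and then restarts it, standing in for the supervisor (systemd, Kubernetes, and so on) that should do this in a real deployment. It assumes a POSIX system; `start_worker` and `kill_and_restart` are hypothetical helpers.

```python
import signal
import subprocess
import sys
from typing import Tuple


def start_worker() -> subprocess.Popen:
    # Stand-in "service": a Python process that just sleeps.
    return subprocess.Popen([sys.executable, "-c", "import time; time.sleep(60)"])


def kill_and_restart(proc: subprocess.Popen) -> Tuple[int, subprocess.Popen]:
    """Send SIGKILL (what `kill -9 <pid>` does) and restart the worker, the way a
    supervisor would. POSIX only: signal.SIGKILL does not exist on Windows."""
    proc.send_signal(signal.SIGKILL)
    returncode = proc.wait(timeout=5)
    # subprocess reports death by signal N as a return code of -N.
    return returncode, start_worker()


if __name__ == "__main__":
    worker = start_worker()
    code, worker = kill_and_restart(worker)
    print(code)           # -9 on Linux and macOS
    print(worker.poll())  # None: the replacement is running
    worker.kill()
    worker.wait()
```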

Examples of experiments include:

Fault injection

- Objective: introduce faults or errors deliberately to observe how the system responds.

- Example: inject faults into specific components, such as network packet loss, data corruption during transmission, or simulating hardware failures.
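As an illustration of fault injection, the sketch below simulates a lossy, corrupting link in-process and checks that a receiver discards damaged data. It is a toy model under stated assumptions: packets carry a CRC-32 checksum (computed with `zlib.crc32`), the loss and corruption rates are arbitrary, and `faulty_channel` and `receive` are hypothetical names. Tools that inject faults at the network level work very differently.

```python
import random
import zlib
from typing import List, Tuple

Packet = Tuple[bytes, int]  # (payload, CRC-32 checksum computed by the sender)


def make_packet(payload: bytes) -> Packet:
    return payload, zlib.crc32(payload)


def faulty_channel(packets: List[Packet], loss_rate: float, corrupt_rate: float,
                   rng: random.Random) -> List[Packet]:
    """Simulated link that drops some packets and flips one bit in others."""
    delivered: List[Packet] = []
    for payload, crc in packets:
        if rng.random() < loss_rate:
            continue  # injected fault: packet loss
        if payload and rng.random() < corrupt_rate:
            data = bytearray(payload)
            data[rng.randrange(len(data))] ^= 0x01  # injected fault: bit flip in transit
            payload = bytes(data)
        delivered.append((payload, crc))
    return delivered


def receive(packets: List[Packet]) -> List[bytes]:
    """Receiver under test: it must drop packets whose checksum no longer matches."""
    return [payload for payload, crc in packets if zlib.crc32(payload) == crc]


packets = [make_packet(f"msg-{i}".encode()) for i in range(1000)]
received = receive(faulty_channel(packets, loss_rate=0.05, corrupt_rate=0.02, rng=random.Random(0)))
print(f"{len(received)} of {len(packets)} packets accepted")
```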

Shutdown

- Objective: simulate the sudden unavailability of system components or services.

- Example: initiate controlled shutdowns of servers, databases, or critical services to assess the system's ability to handle and recover from unexpected outages.

Code injection

- Objective: inject custom code into the system to observe its impact on functionality and stability.

- Example: dynamically inject code snippets or scripts into running processes to simulate unexpected behavior or introduce controlled failures.

Latency injection

- Objective: simulate increased network latency to observe how the system performs under slower communication conditions.

- Example: introduce delays in network requests to mimic high-latency connections.

Random server outages

- Objective: test how the system responds when one or more servers unexpectedly go offline.

- Example: temporarily shut down random servers and observe the impact on system stability.

Resource exhaustion

- Objective: evaluate system behavior under conditions of resource constraints, such as high CPU or memory usage.

- Example: increase resource utilization to levels that approach or exceed system capacity.

Simulated DDoS attacks

- Objective: assess how the system handles increased traffic loads and potential distributed denial-of-service (DDoS) scenarios.

- Example: generate a controlled influx of traffic to simulate a DDoS attack.

Database failures

- Objective: test the system's response to database outages or performance degradation.

- Example: intentionally disrupt database connections or introduce slow queries.

Dependency failures

- Objective: evaluate how the system copes with failures in external dependencies, such as APIs or third-party services.

- Example: temporarily disable or simulate errors in interactions with dependent services.
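A dependency-failure experiment often comes down to checking for a graceful fallback. In this hypothetical sketch, `with_injected_failures` wraps a client function so that a chosen fraction of calls raise an error, and `homepage_rows` is the code under test; all names and the fallback content are made up for illustration.

```python
import random
from typing import Callable, List, Optional


class DependencyError(Exception):
    """Error raised by the (hypothetical) recommendations client."""


def with_injected_failures(fetch: Callable[[str], List[str]], failure_rate: float,
                           rng: Optional[random.Random] = None) -> Callable[[str], List[str]]:
    """Wrap a dependency client so that a fraction of its calls fail."""
    rng = rng or random.Random()

    def wrapper(user_id: str) -> List[str]:
        if rng.random() < failure_rate:
            raise DependencyError("injected failure")
        return fetch(user_id)

    return wrapper


FALLBACK_ROWS = ["Popular now", "New releases"]  # hypothetical static fallback


def homepage_rows(fetch: Callable[[str], List[str]], user_id: str) -> List[str]:
    """Code under test: fall back to static rows instead of failing the page."""
    try:
        return fetch(user_id)
    except DependencyError:
        return list(FALLBACK_ROWS)


def personalized(user_id: str) -> List[str]:
    # Hypothetical healthy dependency.
    return [f"Picks for {user_id}"]


broken = with_injected_failures(personalized, failure_rate=1.0)
print(homepage_rows(broken, "u1"))  # ['Popular now', 'New releases']
```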

Configuration changes

- Objective: test the system's ability to adapt to changes in configuration settings.

- Example: dynamically modify configuration parameters and observe the impact on the system.

Disk space exhaustion

- Objective: assess how the system behaves when facing low disk space scenarios.

- Example: fill up disk space to trigger alerts and observe system response.
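A disk-exhaustion experiment can be scripted with the standard library. This is a sketch under assumptions: the 10% free-space alert rule is hypothetical, the ballast file is written into a directory on the disk under test, and a reserve guard stops short of filling the disk completely so the host stays usable. `low_disk_alert` and `fill_disk` are illustrative names.

```python
import os
import shutil
import tempfile

ALERT_FREE_FRACTION = 0.10  # hypothetical alert rule: fire when under 10% is free


def low_disk_alert(path: str, threshold: float = ALERT_FREE_FRACTION) -> bool:
    """The check whose behavior the experiment is meant to exercise."""
    usage = shutil.disk_usage(path)
    return usage.free / usage.total < threshold


def fill_disk(path: str, target_bytes: int, reserve_bytes: int = 512 * 1024**2,
              chunk_bytes: int = 1024**2) -> str:
    """Write a ballast file of up to `target_bytes` into `path`, stopping early so
    that at least `reserve_bytes` stay free. Delete the returned file to roll back."""
    ballast = os.path.join(path, "chaos-ballast.bin")
    # Random bytes, so that compressing filesystems cannot shrink the ballast.
    chunk = os.urandom(chunk_bytes)
    written = 0
    with open(ballast, "wb") as f:
        while written < target_bytes:
            n = min(chunk_bytes, target_bytes - written)
            if shutil.disk_usage(path).free - n < reserve_bytes:
                break  # safety guard: never fill the disk completely
            f.write(chunk[:n])
            f.flush()
            os.fsync(f.fileno())  # push data to disk so free-space figures reflect it
            written += n
    return ballast


if __name__ == "__main__":
    workdir = tempfile.mkdtemp()
    ballast = fill_disk(workdir, target_bytes=64 * 1024**2)
    try:
        print("alert fired:", low_disk_alert(workdir))
    finally:
        os.remove(ballast)  # roll back: free the space again
        os.rmdir(workdir)
```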

It's crucial to conduct chaos engineering experiments thoughtfully and with proper precautions to avoid unintended negative consequences. The primary goal is to uncover weaknesses in a controlled manner and enhance the overall resilience of the system.

Advantages and disadvantages of chaos testing

Advantages

Identifying potential points of failure. Chaos testing allows IT and DevOps teams to quickly identify and resolve issues that may go unnoticed with other types of testing. Simulating various failure scenarios provides valuable insights into system responses.

Improved application performance monitoring. Regular chaos testing builds confidence in distributed systems and helps keep applications performing well during major failures. Proactive, ongoing testing reduces the likelihood of unplanned downtime.

Greater integrity and reliability. Chaos testing enhances the integrity, resilience, and reliability of systems, leading to improved user satisfaction.

Improved response time. By testing failure scenarios and monitoring system response times, teams can identify areas for improvement. This minimizes downtime and ensures quick recovery.

Reduced risk of data loss. Deliberate testing for failures helps teams identify potential data loss scenarios, allowing them to implement measures such as regular backups, disaster recovery plans, and other data protection mechanisms.

Innovative nature. Chaos testing helps identify flaws in software design and architecture, fostering innovation by providing insights to enhance existing components.

Limitations and challenges

Suitability for small systems. Chaos testing may not be suitable for small systems, desktop software, or applications that are not critical to business operations, where downtime is acceptable if fixed by the end of the day.

Unnecessary damage. There is a risk of chaos testing leading to real losses exceeding acceptable norms. Controlling the blast radius is crucial to limit the costs of detecting vulnerabilities.

Incomplete observability. Without comprehensive observability, distinguishing important and non-critical dependencies becomes challenging, hindering the identification of root causes.

Unclear hypothesis. Chaos testing requires a clear understanding of the normal system functioning and expected responses. Ambiguous results may arise without a well-formulated hypothesis and model.

Increased testing volume. Chaos testing involves unpredictable scenarios, leading to more testing of edge cases. Managing and executing these scenarios can be time-consuming.

Complexity in measuring results. Testing system resilience under unpredictable conditions can make it difficult to accurately measure test results. It is not always easy to determine if a failure was caused by a weakness in system architecture or design or if it was just an unexpected event that could not have been anticipated.

Cost. Chaos testing may increase testing costs due to the need for a sophisticated test environment, specialized knowledge, ongoing maintenance, and infrastructure upgrades.

Chaos testing, while offering significant advantages, requires careful consideration and planning to mitigate potential challenges and ensure effective implementation.

Chaos engineering as an exploration of the unknown

Regular testing and chaos engineering serve distinct purposes in the software development lifecycle. Traditional testing aims to verify if a program works properly, often confirming expected behaviors. On the other hand, chaos engineering is an experimental approach that generates new knowledge by formulating and testing hypotheses.

Chaos experiments provide two possible outcomes: either increasing confidence in existing assumptions or uncovering new capabilities of the system. In the face of a refuted hypothesis, teams delve into investigations to understand why, leading to a deeper exploration of the unknown.

While chaos testing is highly effective, it cannot prevent all production incidents. However, its main advantage lies in providing a profound understanding of failure points in a system. This insight is invaluable for building fault tolerance, implementing safeguards, and enhancing incident response.

Chaos testing fosters a mindset of defensive coding, promoting awareness of vulnerabilities within an organization. This continuous improvement cycle leads to a net increase in software reliability over time. The goal of chaos testing is not to prevent failures entirely but to enable early resolution of identified issues and better preparedness for assessing the likely causes of those that persist.

In essence, chaos engineering is a strategic exploration of the unknown, aimed at gaining insights, fostering resilience, and continuously improving software reliability. The practice encourages a proactive approach to system robustness and contributes to a culture of learning and innovation within development teams.

This curated list of awesome chaos engineering resources contains a wealth of additional information on chaos testing.

Related Posts

You might also like

Quality Assurance

Quality is not a separate Team. It's an Engineering discipline. And it's failing!

Nitin Deshmukh

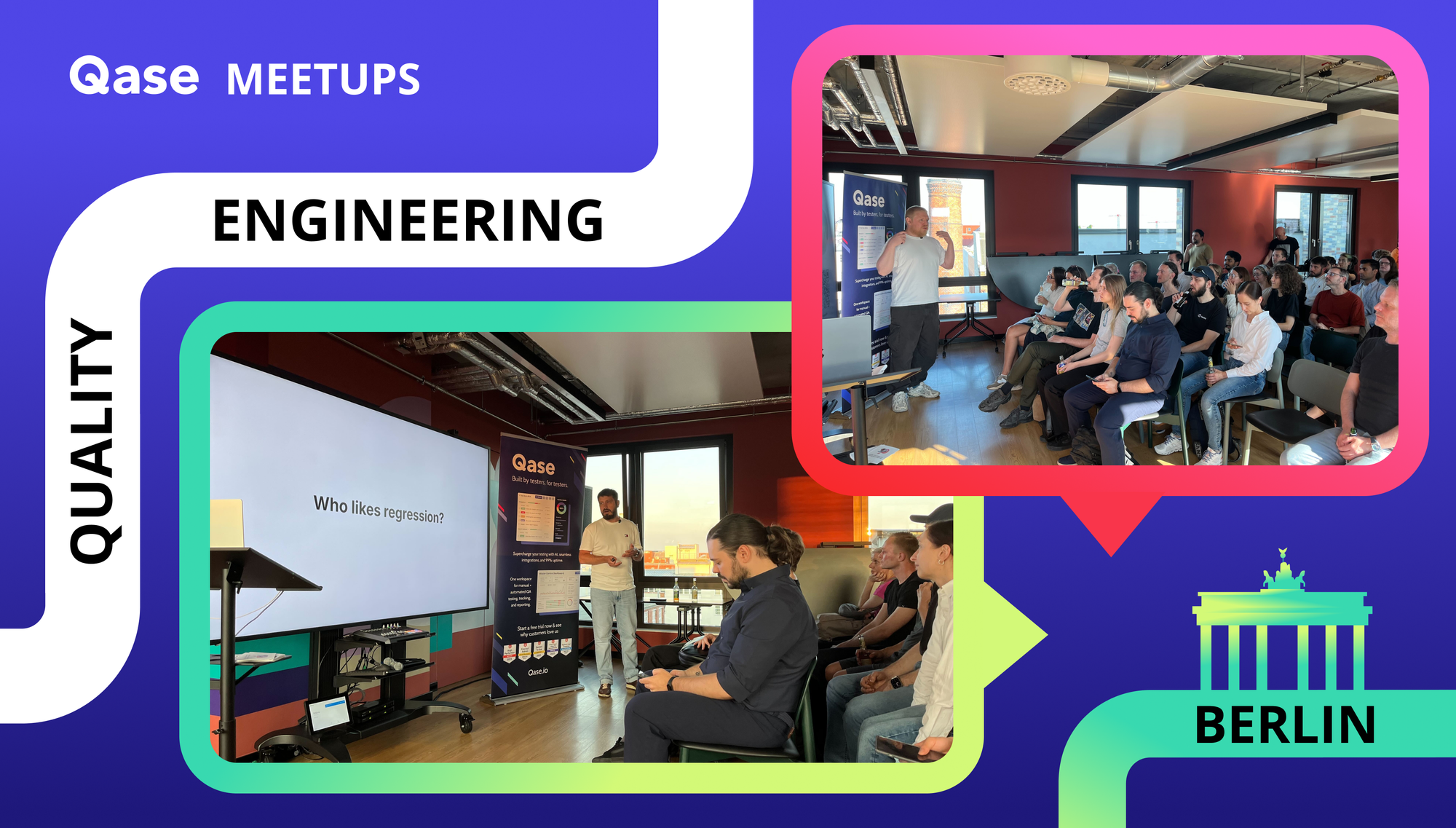

Quality Assurance

Recap: 7th Berlin QA Meetup – Lessons from Trade Republic, JetBrains, and InDrive

Vitaly Sharovatov

Quality Assurance

What is compliance testing and why is it important?

Sam Hollis