Gerald Weinberg, author of the classics The Psychology of Computer Programming and Introduction to General Systems Thinking, once quipped, “If builders built buildings the way programmers wrote programs, then the first woodpecker that came along would destroy civilization.”

Decades later, in 2009, Jeff Atwood, founder of Stack Overflow, wrote, “I've been unhappy with every single piece of software I've ever released.” By the end of the development cycle, he writes, “you end up with software that is a pale shadow of the shining, glorious monument to software engineering that you envisioned when you started.”

And Tim Bray, a former distinguished engineer at AWS, shared a similar sentiment while reflecting on the first two decades of his programming career, writing, “Back then, it seemed that for any piece of software I wrote, after a couple of years I started hating it, because it became increasingly brittle and terrifying.”

But Bray saw a light at the end of the tunnel and chased it: Testing.

Testing, and testing culture more broadly, is, according to Bray, the “biggest single contributor to improved software in [his] lifetime.” Bray saw what it was like to develop before testing culture, when developers just threw code over the wall at QA testers and IT teams, and his conclusion is direct: “The way we do things now is better.” And, alluding to the Weinberg quote, he writes, “Civilization need not fear woodpeckers.”

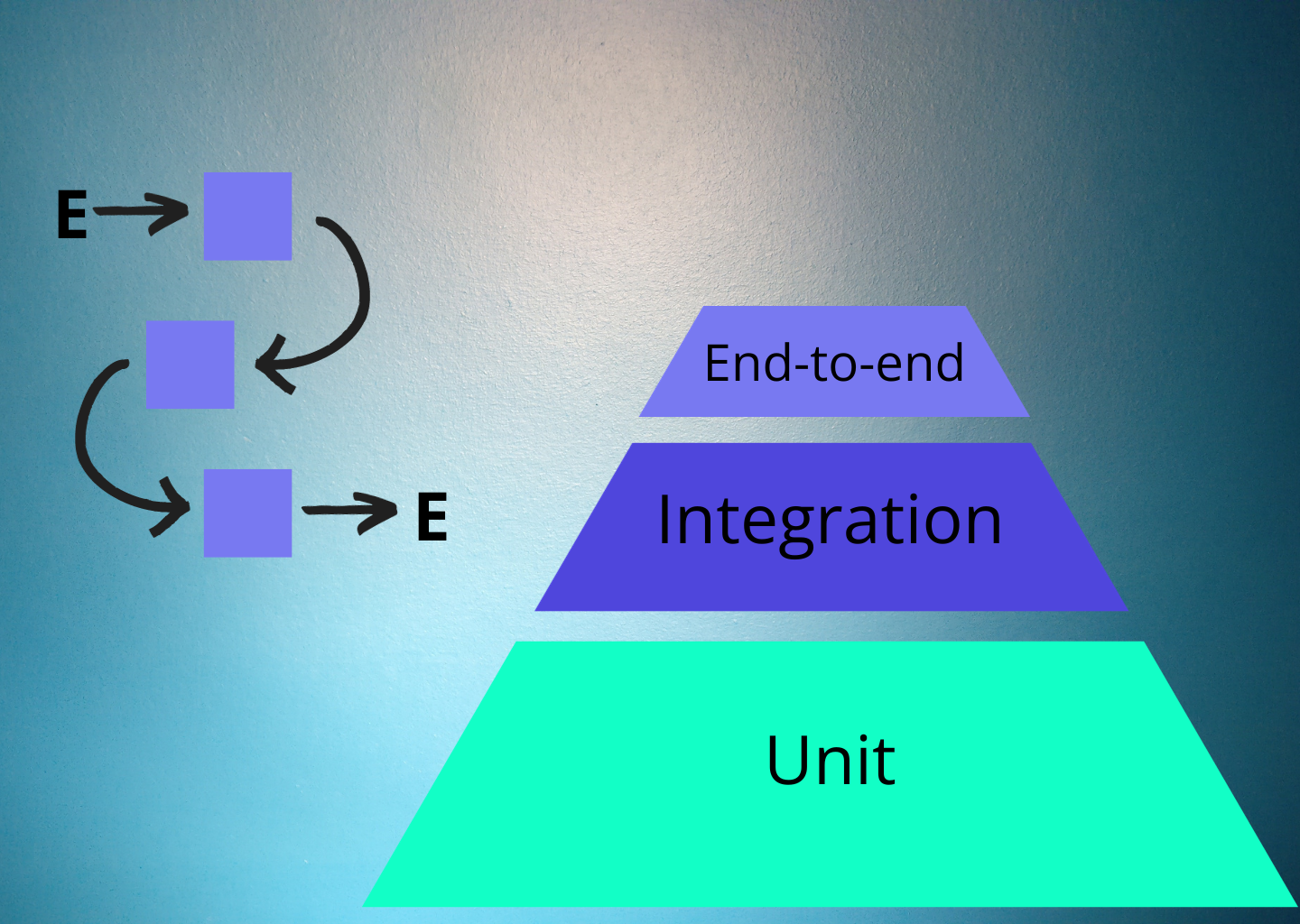

Testing existed prior to testing culture as we know it today, but testing today is led by developers and tends to take one of three forms: unit testing, integration testing, and end-to-end testing. All three forms of testing have become canonical, but the third, end-to-end testing, is frequently the least understood.

It’s end-to-end testing, however, that makes an application most resilient to woodpeckers (and worse), so developers who care about testing need to understand what end-to-end testing is and how it supports the rest of their test suite.

What is end-to-end testing?

End-to-end testing — sometimes called broad stack testing — is a form of software testing that focuses on how (and how well) a software application functions from beginning to end. End-to-end tests, typically performed less frequently than unit tests and integration tests, test the functionality of every process, component, and interaction and how they all weave together in a given workflow.

End-to-end tests typically take on the perspective of an end-user. By simulating how an end-user would use an application, developers can find any defects or bugs that might not have been caught in previous tests. By performing these tests after other, smaller tests, developers can look for errors that might arise from how the entire application fits together.

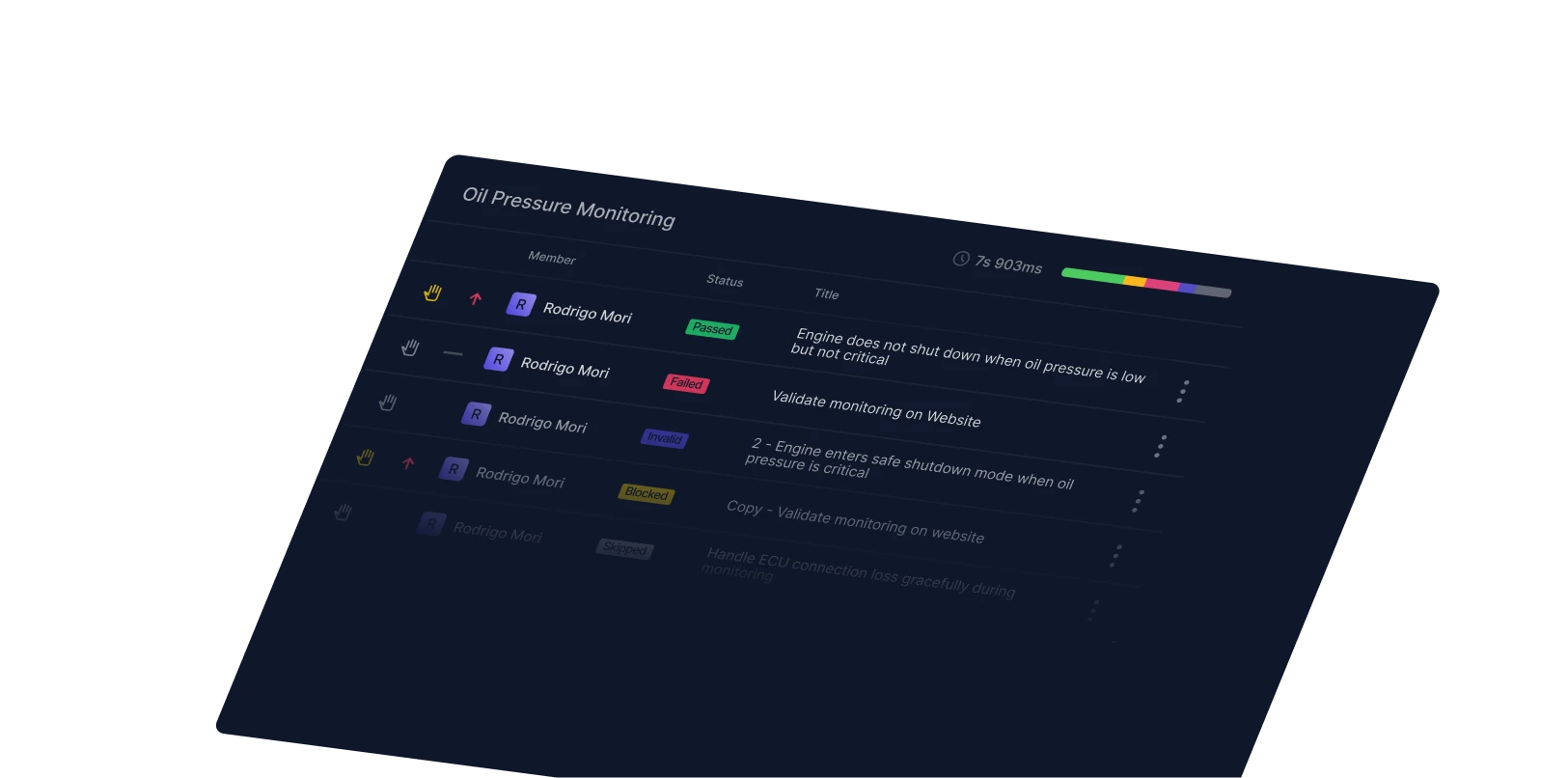

E2E testing is the final part of the testing pyramid

The testing pyramid — introduced by Mike Cohn in the book Succeeding with Agile and popularized by Martin Fowler on his blog — is a framework that helps developers visualize the testing suite as a whole and, broadly, what proportion of each type of test they need.

The testing pyramid takes its shape from the proportionality of tests it recommends: Many unit tests (which check whether a function or class works as intended), fewer integration tests (which check how components of the system integrate and work together), and even fewer end-to-end tests (which test how the whole application works).
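The difference in scope between the three levels can be sketched in a few lines. The example below is a minimal illustration, not a recommended structure: `apply_discount`, `Cart`, and `Shop` are hypothetical names invented for this sketch, and the in-memory `Shop` only stands in for a real deployed application.

```python
# Hypothetical toy application, used only to illustrate test scope.

def apply_discount(price_cents: int, percent: int) -> int:
    """Pure function: the natural target of a unit test."""
    return price_cents - (price_cents * percent) // 100


class Cart:
    """Component that combines stored items with the discount function."""

    def __init__(self) -> None:
        self.items: list[int] = []

    def add(self, price_cents: int) -> None:
        self.items.append(price_cents)

    def total(self, discount_percent: int = 0) -> int:
        return apply_discount(sum(self.items), discount_percent)


class Shop:
    """Stand-in for the whole application as a user experiences it."""

    def __init__(self) -> None:
        self.carts: dict[str, Cart] = {}
        self.orders: list[tuple[str, int]] = []

    def add_to_cart(self, user: str, price_cents: int) -> None:
        self.carts.setdefault(user, Cart()).add(price_cents)

    def checkout(self, user: str, discount_percent: int = 0) -> int:
        total = self.carts.pop(user).total(discount_percent)
        self.orders.append((user, total))
        return total


def test_unit_discount() -> None:
    # Unit scope: one function, no collaborators.
    assert apply_discount(1000, 10) == 900


def test_integration_cart_total() -> None:
    # Integration scope: Cart and apply_discount working together.
    cart = Cart()
    cart.add(1000)
    cart.add(500)
    assert cart.total(discount_percent=10) == 1350


def test_e2e_user_checks_out() -> None:
    # End-to-end scope: a full user workflow and its visible results.
    shop = Shop()
    shop.add_to_cart("ada", 1000)
    shop.add_to_cart("ada", 500)
    assert shop.checkout("ada", discount_percent=10) == 1350
    assert shop.orders == [("ada", 1350)]
    assert "ada" not in shop.carts
```

Each test exercises more of the system than the one before it, which is why the higher levels tend to be slower and fewer in number.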

More than a decade after Cohn introduced the test pyramid, the basic structure has become standardized — albeit with a few exceptions and confusions.

The line between end-to-end tests and integration tests, for example, is often blurry. Chris Coyier, cofounder of CodePen, clears up this confusion by reframing the testing pyramid as a spectrum, with unit tests on one side and end-to-end tests on the other. End-to-end tests, he writes, are big, beefy, and sometimes flaky; integration tests check “a limited set of multiple systems.”

The pyramid framework steers developers away from a few common errors, especially with regard to end-to-end testing. Adam Bender, principal engineer at Google, for example, describes two of them: an ice cream cone antipattern, which happens when developers write too many end-to-end tests, and an hourglass antipattern, which happens when developers write too few integration tests.

Test suite antipatterns (Source)

As Bender writes, the first antipattern results in test suites that “tend to be slow, unreliable, and difficult to work with.” According to Bender, the second antipattern isn’t as bad, but “it still results in many end-to-end test failures that could have been caught quicker and more easily with a suite of medium-scope tests.” When developers rush prototype projects to production, the first antipattern is likely; when systems are so tightly coupled that it’s hard to isolate dependencies, the second is more likely.
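As a rough illustration, the shape of a suite can be read off its test counts. The sketch below is a heuristic invented for this example, not a rule from Bender: the function name `classify_suite_shape` and the strict comparisons it uses are assumptions, and real suites will need more judgment than three numbers can provide.

```python
def classify_suite_shape(unit: int, integration: int, e2e: int) -> str:
    """Label a test suite by comparing how many tests sit at each level.

    The comparisons are an illustrative assumption, not a published rule.
    """
    if unit > integration > e2e:
        return "pyramid"
    if e2e > integration > unit:
        # Most tests are end-to-end: wide at the top, narrow at the bottom.
        return "ice cream cone"
    if integration < unit and integration < e2e:
        # Plenty of unit and e2e tests, but a thin middle layer.
        return "hourglass"
    return "other"


if __name__ == "__main__":
    print(classify_suite_shape(unit=500, integration=100, e2e=20))
    print(classify_suite_shape(unit=20, integration=100, e2e=500))
    print(classify_suite_shape(unit=500, integration=10, e2e=200))
```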

There are also a few who quibble with the testing pyramid, such as the Spotify team, which instead proposes a “testing honeycomb.”

Testing Honeycomb (Source)

To avoid getting too deep into this discussion, keep Bray’s testing advice in mind: “There’s room for argument here, none for dogma.” In other words, the most important thing is testing, the second most is testing across all three categories, and the least important thing is arguing in strict terms about the “right” balance and approach.

Types of e2e testing (with examples)

There are three primary types of e2e tests: horizontal, vertical, and automatic.

The first two types of e2e tests are manual. Horizontal e2e tests assume the perspective of an end user navigating through the functionalities and workflows of the application. Horizontal end-to-end testing encompasses the entire application, so developers need to have well-built and well-defined workflows to perform these tests well.

For example, a horizontal e2e test might test a user interface, a database, and an integration with a messaging tool, such as Slack. Testers will run through this whole workflow as users and look for errors, bugs, and other issues.
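A workflow like this can also be driven by code. The following is a minimal sketch under several assumptions: the `/signup` endpoint, the `users` table, and the `outbox` list (which stands in for a real Slack integration) are all hypothetical, and the server is a tiny standard-library HTTP server rather than a real application.

```python
import http.client
import json
import sqlite3
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


def build_app(db: sqlite3.Connection, outbox: list[str]) -> HTTPServer:
    """Wire a tiny HTTP API to a database and a fake chat notifier."""
    db.execute("CREATE TABLE users (email TEXT PRIMARY KEY)")

    class Handler(BaseHTTPRequestHandler):
        def do_POST(self) -> None:
            length = int(self.headers["Content-Length"])
            email = json.loads(self.rfile.read(length))["email"]
            db.execute("INSERT INTO users VALUES (?)", (email,))
            db.commit()
            outbox.append(f"New signup: {email}")  # stands in for a Slack message
            body = json.dumps({"status": "created"}).encode()
            self.send_response(201)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)

        def log_message(self, *args) -> None:
            pass  # keep test output quiet

    return HTTPServer(("127.0.0.1", 0), Handler)  # port 0 picks a free port


def run_signup_workflow() -> tuple[int, list[str], list[str]]:
    """Act as a user: send a real HTTP request, then inspect every system it touched."""
    db = sqlite3.connect(":memory:", check_same_thread=False)
    outbox: list[str] = []
    server = build_app(db, outbox)
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        conn = http.client.HTTPConnection("127.0.0.1", server.server_address[1], timeout=5)
        payload = json.dumps({"email": "ada@example.com"}).encode()
        conn.request("POST", "/signup", body=payload,
                     headers={"Content-Type": "application/json"})
        response = conn.getresponse()
        status = response.status
        response.read()
        conn.close()
    finally:
        server.shutdown()
        server.server_close()
    emails = [row[0] for row in db.execute("SELECT email FROM users")]
    return status, emails, outbox
```

The test passes only if the interface, the database, and the notifier all did their part, which is what makes it horizontal: a single check spans the whole workflow.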

Vertical end-to-end tests check the functionality of an application layer by layer — typically working in a linear, hierarchical order. Testers break applications into disparate layers that they can test individually and in isolation. For example, testers might focus on particular subsystems, such as API requests and calls to a particular database, to see whether those subsystems are working as intended.
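A layer-by-layer check might look something like the sketch below. It assumes a hypothetical two-layer signup feature: `UserRepository` is the data layer, `SignupService` is the logic layer, and `InMemoryRepository` is a test double that lets the service be exercised without a database. None of these names come from a real library.

```python
import sqlite3


class UserRepository:
    """Data layer: the only code that talks to the database."""

    def __init__(self, db: sqlite3.Connection) -> None:
        self.db = db
        self.db.execute("CREATE TABLE IF NOT EXISTS users (email TEXT PRIMARY KEY)")

    def add(self, email: str) -> None:
        self.db.execute("INSERT INTO users VALUES (?)", (email,))

    def exists(self, email: str) -> bool:
        row = self.db.execute(
            "SELECT 1 FROM users WHERE email = ?", (email,)
        ).fetchone()
        return row is not None


class InMemoryRepository:
    """Test double with the same interface, so the service can be tested alone."""

    def __init__(self) -> None:
        self.emails: set[str] = set()

    def add(self, email: str) -> None:
        self.emails.add(email)

    def exists(self, email: str) -> bool:
        return email in self.emails


class SignupService:
    """Logic layer: validation and duplicate handling."""

    def __init__(self, repo) -> None:
        self.repo = repo

    def signup(self, email: str) -> str:
        normalized = email.strip().lower()
        if "@" not in normalized:
            return "invalid"
        if self.repo.exists(normalized):
            return "duplicate"
        self.repo.add(normalized)
        return "created"


def check_data_layer() -> bool:
    # Exercise only the database layer, against a throwaway in-memory database.
    repo = UserRepository(sqlite3.connect(":memory:"))
    repo.add("ada@example.com")
    return repo.exists("ada@example.com") and not repo.exists("bob@example.com")


def check_service_layer() -> bool:
    # Exercise only the logic layer, with the database swapped for a double.
    service = SignupService(InMemoryRepository())
    results = [service.signup(e) for e in (" Ada@Example.com", "ada@example.com", "nope")]
    return results == ["created", "duplicate", "invalid"]
```

If `check_data_layer` fails, the problem is in storage; if `check_service_layer` fails, it is in the signup logic. That localization is the main benefit of working through one layer at a time.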

Automatic e2e testing, in contrast to both previous testing types, typically involves programming tests that mirror the manual ones and then integrating them into an automated testing tool, a CI/CD pipeline, or both.

Choosing when to shift from manual testing to automatic isn’t always an obvious decision. Unlike unit tests, which many agree should be automated, e2e tests often benefit from a human touch, and this manual involvement is sometimes surprisingly practical because e2e tests should be run far less often than unit tests.

Advantages and disadvantages of e2e testing

Back in 2015, the Google Testing Blog published a post called “Just Say No to More End-to-End Tests” that stirred up some controversy.

Among the many responses and arguments was an article from Adrian Sutton, formerly lead developer at LMAX. In the response, Sutton argues that the Google article “winds up being a rather depressing evaluation of the testing capabilities, culture and infrastructure at Google” rather than a substantial takedown of end-to-end testing.

The details of the debate are interesting, but the most important takeaway is the throughline that emerged: End-to-end testing lives or dies based on how well an individual organization or team runs it. Almost all of the advantages and disadvantages of e2e testing come from how well the developers and testers design and implement the testing suite.

For example, in the ice cream cone antipattern mentioned above, the problem isn’t the e2e tests themselves; it’s the proportion of e2e tests to other tests. Martin Fowler argues that e2e tests are best thought of as a “second line of test defense.” A failure in an e2e test, he writes, shows that you have both a bug and a gap in your unit tests.

Even when e2e tests aren’t working well, the upstream cause might lie beyond the e2e tests themselves. Research from Jez Humble, co-author of Accelerate: The Science of Lean Software and DevOps, for example, shows that having tests “primarily created and maintained either by QA or an outsourced party is not correlated with IT performance.”

Humble’s theory, he explains, is that code is more testable when developers write the tests and that when developers are more responsible for tests, they tend to invest more time and energy into writing and maintaining them.

Of course, if you ask developers what annoys them about e2e tests — or any test, really — they’re likely to complain about flaky tests.

In a paper called The Developer Experience of Flaky Tests, researchers found that over half of developers experience flaky tests at least monthly. The researchers defined a flaky test as “a test case that can both pass and fail without any changes to the code under test,” and more than 90% of respondents agreed with that definition.
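That definition translates directly into a check: run the same test repeatedly against unchanged code and see whether the outcomes disagree. The sketch below is a minimal illustration; `classify_test` and the default of 20 runs are assumptions made for this example, and the deliberately flaky test relies on hidden shared state to fail on every second run.

```python
from typing import Callable


def classify_test(test: Callable[[], None], runs: int = 20) -> str:
    """Run a test several times against unchanged code and label the result."""
    if runs < 1:
        raise ValueError("runs must be at least 1")
    outcomes: set[str] = set()
    for _ in range(runs):
        try:
            test()
            outcomes.add("pass")
        except AssertionError:
            outcomes.add("fail")
    if outcomes == {"pass", "fail"}:
        return "flaky"  # both outcomes seen, with no change to the code under test
    return outcomes.pop()


def make_state_dependent_test() -> Callable[[], None]:
    """Build a test that leaks state between runs, a common source of flakiness."""
    calls = {"count": 0}

    def test() -> None:
        calls["count"] += 1
        if calls["count"] % 2 == 0:
            raise AssertionError("fails on every second run")

    return test


def always_passes() -> None:
    pass


def always_fails() -> None:
    raise AssertionError("genuine failure")
```

A test that fails every time is a genuine failure worth investigating, while a test labeled `flaky` here needs its source of nondeterminism removed before its results can be trusted.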

One of the most notable findings — beyond the all too common experience of developers struggling with flaky tests — is that developers “who experience flaky tests more often may be more likely to ignore potentially genuine test failures.” The researchers further found that the more often that developers experience flaky tests, the more likely they are to take no action in response to those tests.

All in all, the advantages and disadvantages of e2e testing tend to be very context-dependent. For example, Sutton reports that even though building a testing process for end-to-end testing took a lot of time and effort, he found that the results were “invaluable” because the tests freed the LMAX team to “try daring things and make sweeping changes, confident that if anything is broken it will be caught.”

But if a testing suite isn’t well designed, e2e tests can be slow, flaky, and altogether burdensome.

How to write e2e test cases

Different teams will have different approaches and methods for writing e2e tests, but most tend to involve five steps: Identifying what you want to test, breaking the test scenario down into discrete steps, following the steps manually, writing a test that can perform the manual steps automatically, and integrating the automated test into a CI/CD pipeline.

Identifying what you want to test — whether it be a vertical or horizontal test — often involves cross-departmental collaboration among product managers, developers, and testers.

After you identify a range of scenarios you want to test, you can break each scenario down into a series of steps. Here, you’ll want to be as specific as possible and include the expected results of each step.
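One way to capture a scenario at this level of detail is to record each step alongside its expected result. The sketch below assumes a hypothetical to-do application modeled as a plain dictionary; `Step`, `run_scenario`, and the three step functions are illustrative names, not part of any testing tool.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Step:
    description: str
    action: Callable[[dict], object]  # performs the step against shared state
    expected: object                  # what the tester should observe afterwards


def run_scenario(steps: list[Step]) -> list[str]:
    """Execute steps in order and stop at the first mismatch."""
    state: dict = {}
    report: list[str] = []
    for number, step in enumerate(steps, start=1):
        actual = step.action(state)
        passed = actual == step.expected
        status = "ok" if passed else f"FAILED (got {actual!r})"
        report.append(f"{number}. {step.description}: {status}")
        if not passed:
            break
    return report


# Hypothetical application actions for a to-do list.
def open_app(state: dict) -> str:
    state["todos"] = []
    return "empty list"


def add_todo(state: dict) -> int:
    state["todos"].append("buy milk")
    return len(state["todos"])


def complete_todo(state: dict) -> list:
    state["todos"].remove("buy milk")
    return state["todos"]


SCENARIO = [
    Step("Open the app", open_app, "empty list"),
    Step("Add a todo", add_todo, 1),
    Step("Complete the todo", complete_todo, []),
]

if __name__ == "__main__":
    print("\n".join(run_scenario(SCENARIO)))
```

Writing the expected result next to each step keeps the manual checklist and any later automation in agreement about what counts as a pass.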

Many teams, especially if the product is new or relatively simple, can stop here. Developers and testers can assume the perspective of end users and run through the steps they identified, noting any issues along the way. But at large enough scales, in size or complexity, teams will likely want to automate these steps, which usually requires using an automated testing tool.

With the right tool adopted and the manual tests made automatic, teams can then integrate the tool and its tests into the CI/CD pipeline.
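Most CI systems decide whether a build passed by looking at a command's exit code, so the integration step often comes down to returning zero on success and nonzero on failure. The sketch below uses Python's built-in `unittest` module to show the idea; `place_order` is a hypothetical piece of application code, and a real e2e suite would drive a deployed application instead.

```python
import unittest


def place_order(stock: dict[str, int], item: str) -> str:
    """Hypothetical application code: reserve one unit of an item."""
    if stock.get(item, 0) < 1:
        return "out of stock"
    stock[item] -= 1
    return "confirmed"


class OrderWorkflowTest(unittest.TestCase):
    def test_customer_can_order_until_stock_runs_out(self) -> None:
        stock = {"widget": 1}
        self.assertEqual(place_order(stock, "widget"), "confirmed")
        self.assertEqual(place_order(stock, "widget"), "out of stock")


def run_suite() -> int:
    """Run the workflow tests and return an exit code a CI job can act on."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(OrderWorkflowTest)
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    return 0 if result.wasSuccessful() else 1
```

A pipeline step could call `sys.exit(run_suite())` from a small script, or run `python -m unittest` directly, which reports failure through its exit status in the same way.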

Throughout, developers will have to think through the scope of each test and the bugs they’re looking to identify with each test.

Scope needs to be thought through carefully. Testing every single way all real users could interact with an application would provide a lot of test coverage, but the process would be onerous, and the results unlikely to be worth the cost. Testing too minimally is also a mistake because it can fool teams into thinking an application is well-tested when it isn’t.

Bugs, an object of all testing types, need to be well-targeted. If a testing suite is well designed, unit tests will identify errors in business logic, whereas e2e tests will focus on the functionality of different integrations and workflows. Bugs in this stage should arise as a result of interactions between application components and as a result of emergent, system-level behavior that can’t be predicted at the unit level.
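A small example shows how this kind of bug can slip past unit tests. In the sketch below, which uses hypothetical function names throughout, the pricing function returns dollars and the payment function expects cents. Each function is correct on its own terms, so both unit checks pass, and the mismatch only surfaces when the whole workflow runs.

```python
def price_in_dollars(quantity: int) -> float:
    """Pricing component: returns a total in dollars."""
    return quantity * 2.50


def charge(amount_cents: int) -> str:
    """Payment component: expects an amount in cents."""
    return f"charged {amount_cents} cents"


def checkout_buggy(quantity: int) -> str:
    # Interaction bug: dollars are passed where cents are expected.
    return charge(price_in_dollars(quantity))


def checkout_fixed(quantity: int) -> str:
    # Convert units at the boundary between the two components.
    return charge(round(price_in_dollars(quantity) * 100))


def unit_checks_pass() -> bool:
    # Each component behaves exactly as its own contract says.
    return price_in_dollars(4) == 10.0 and charge(1000) == "charged 1000 cents"


def workflow_check_passes(checkout) -> bool:
    # Only a test of the whole workflow sees the unit mismatch.
    return checkout(4) == "charged 1000 cents"
```

Here `unit_checks_pass()` is true for both versions, while `workflow_check_passes(checkout_buggy)` is false: the customer would be charged 10 cents instead of 10 dollars.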

Ultimately, the best e2e tests will come from iteration, drawing either on the development and testing teams’ own experience or on lessons from other teams. Often, those borrowed lessons can provide time-saving shortcuts, allowing teams to skip past mistakes they otherwise would have made.

The LMAX team, for example, considers the following factors fundamental to effective e2e testing. End-to-end tests, Sutton writes, should:

- Run constantly through the day

- Complete in around 50 minutes, including deploying and starting the servers, running all the tests, and shutting down at the end

- Be required to pass before a version can be considered releasable

- Be owned by the whole team, including testers, developers, and business analysts

Beyond these basics, Sutton writes that the LMAX team tries to ensure that:

- Test results are stored in a database to make it easy to query and search

- Tests are isolated so they can run in parallel to speed things up

- Tests are dynamically distributed across a pool of servers

End-to-end testing, more than other testing types, depends on how well it fits into the rest of your testing suite. At all times, think about how problems might be flowing downstream through other tests and think about how the sum of your testing suite works instead of focusing too narrowly on how well any given test type functions.

Testing builds courage

Developers rarely consider testing an exciting part of the process. Many developers have to be persuaded and incentivized to write and run tests (Google’s Testing on the Toilet program comes to mind), but developers will likely feel most motivated to prioritize testing when they can feel the results.

As Sutton said, end-to-end tests allowed his team to “try daring things and make sweeping changes,” and Bray concurs, writing that working in a well-tested codebase “gives developers courage.”

Modern software, especially at production grade and enterprise scale, can’t rely on sheer coding. As Bray says, “Yes, you could use a public toilet and not wash your hands. Yes, you could eat spaghetti with your fingers. But responsible adults just don’t do those things. Nor do they ship untested code.”
