Quality Management

AI effect on quality, or the epistemological crisis

Vitaly Sharovatov

The recent explosion of articles about ChatGPT has made the topic difficult to avoid.

Initially, there were many positive posts:

- I tried ChatGPT from OpenAI and my mind was blown

- AI bot ChatGPT stuns academics with essay-writing skills and usability

- Rise of the bots: ‘Scary’ AI ChatGPT could eliminate Google within 2 years

- ChatGPT takes Twitter by storm

Then criticism started to appear:

- ChatGPT Is Dumber Than You Think

- The ChatGPT chatbot from OpenAI is amazing, creative, and totally wrong

- AI is finally good at stuff, and that’s a problem

- ChatGPT, Galactica, and the Progress Trap

Some sites, like Stack Overflow, have even banned ChatGPT-generated content.

The main reason for this criticism and these bans is that ChatGPT lacks an ontological understanding of the subject matter it writes about, so it often produces text that is confidently ignorant or simply wrong.

OpenAI CEO Sam Altman confirms this:

ChatGPT is incredibly limited, but good enough at some things to create a misleading impression of greatness.

it's a mistake to be relying on it for anything important right now. it’s a preview of progress; we have lots of work to do on robustness and truthfulness.

The dangerous combination of confidence and ignorance is particularly evident in ChatGPT's outputs. People who don't know much about a topic are less able to tell whether the generated text is accurate.

Human cognition is prone to various biases, and "authority bias" is particularly relevant in the case of ChatGPT. Thanks to growing publicity, many non-experts may treat ChatGPT as an authority on various topics. This can lead people to accept its output without questioning its accuracy. Tweets claiming that "google is dead" or that "there's no more need for wikipedia" are already circulating, demonstrating the dangers of blindly accepting what ChatGPT produces.

However, even if ChatGPT were right in most cases, its use would still pose an epistemological threat to society and to the companies and individuals employing it.

Acquiring professional knowledge requires explicit learning and practice. For example, writing an essay requires understanding the topic and formulating one's thoughts, while doing homework requires recalling and practicing what was learned in class. Similarly, solving an engineering problem requires synthesis, and engineering skill improves with every problem solved.

Midjourney's famous hands hallucination.

Homo sapiens learn by doing.

Using ChatGPT removes the learning process from activities like writing essays, doing homework, or solving problems, because it makes completing these tasks nearly effortless. The person is no longer actively learning and improving their skills; instead, they rely on ChatGPT to do the work for them. While this may be convenient in the short term, it ultimately hinders their ability to learn and improve.

Learning allows people to create new things and solve new problems. As people rely more on ChatGPT, they learn less and become less able to solve new problems or create new information. This, in turn, shrinks the amount of new, human-generated information available for training ChatGPT, creating a self-reinforcing cycle that slows down progress.

If ChatGPT is the only tool used to write text or code in the next ten years, it is likely that the world's knowledge will stagnate.

This vicious epistemological circle is starting right now. Moreover, keeping people from using ChatGPT is challenging: anyone who tries will instantly be called a Luddite. After all, getting any text done in no time is so appealing.

If the world's knowledge stagnates, so will the human ability to solve problems. Porcelain will be added to breast milk.

References:

Related Posts

You might also like

Quality Management

Building a continuous learning mindset as a QA professional

Vitaly Sharovatov

Quality Management

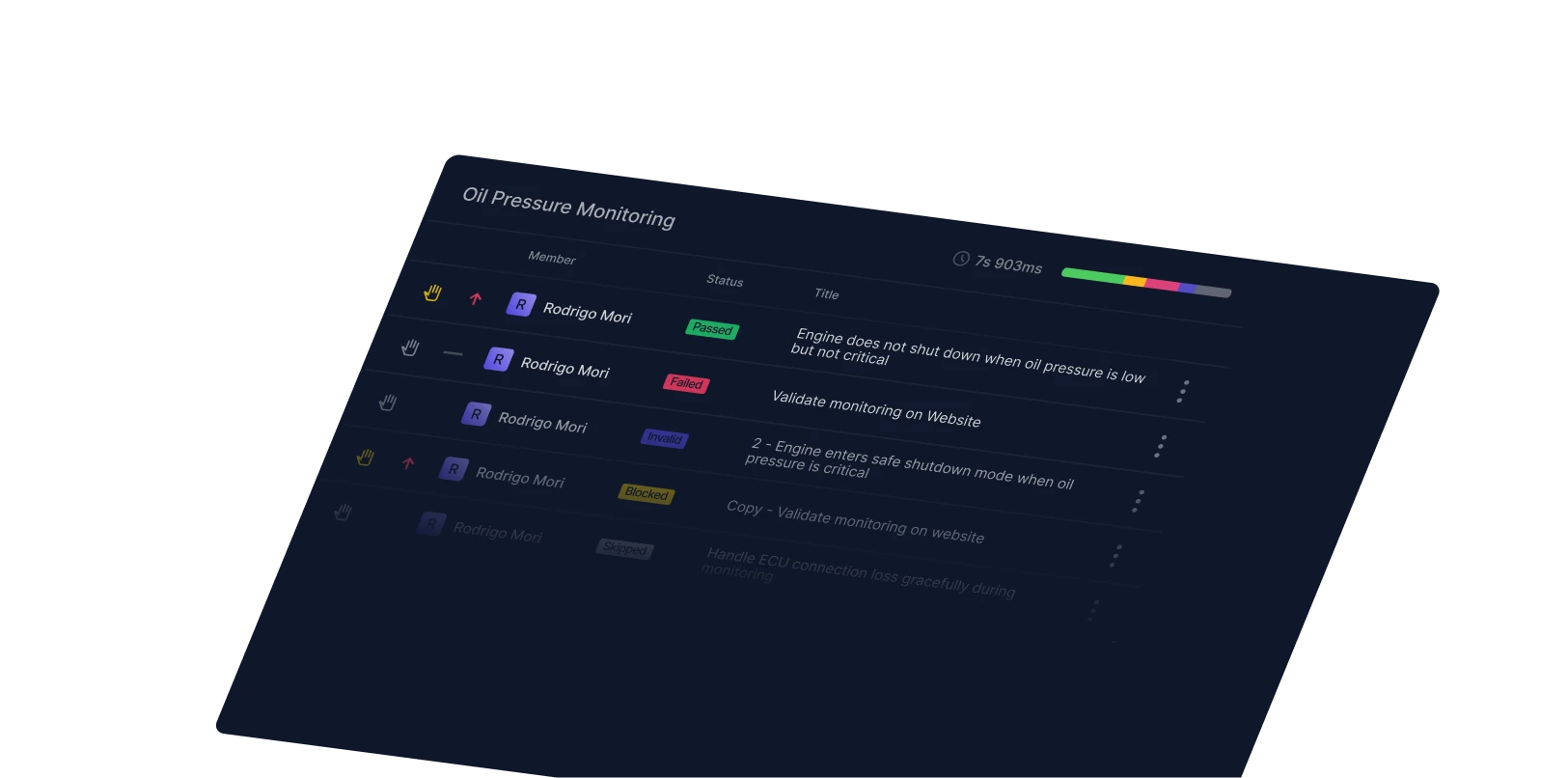

Don’t put off traceability until you desperately need it

Qase Team

Quality Management

Total Quality Management in software development and QA

Vitaly Sharovatov