Stop doing code reviews and try these alternatives

Vitaly Sharovatov

Code review is a standard part of the software development process in many companies, but in many cases performing code reviews is inefficient or even illogical.

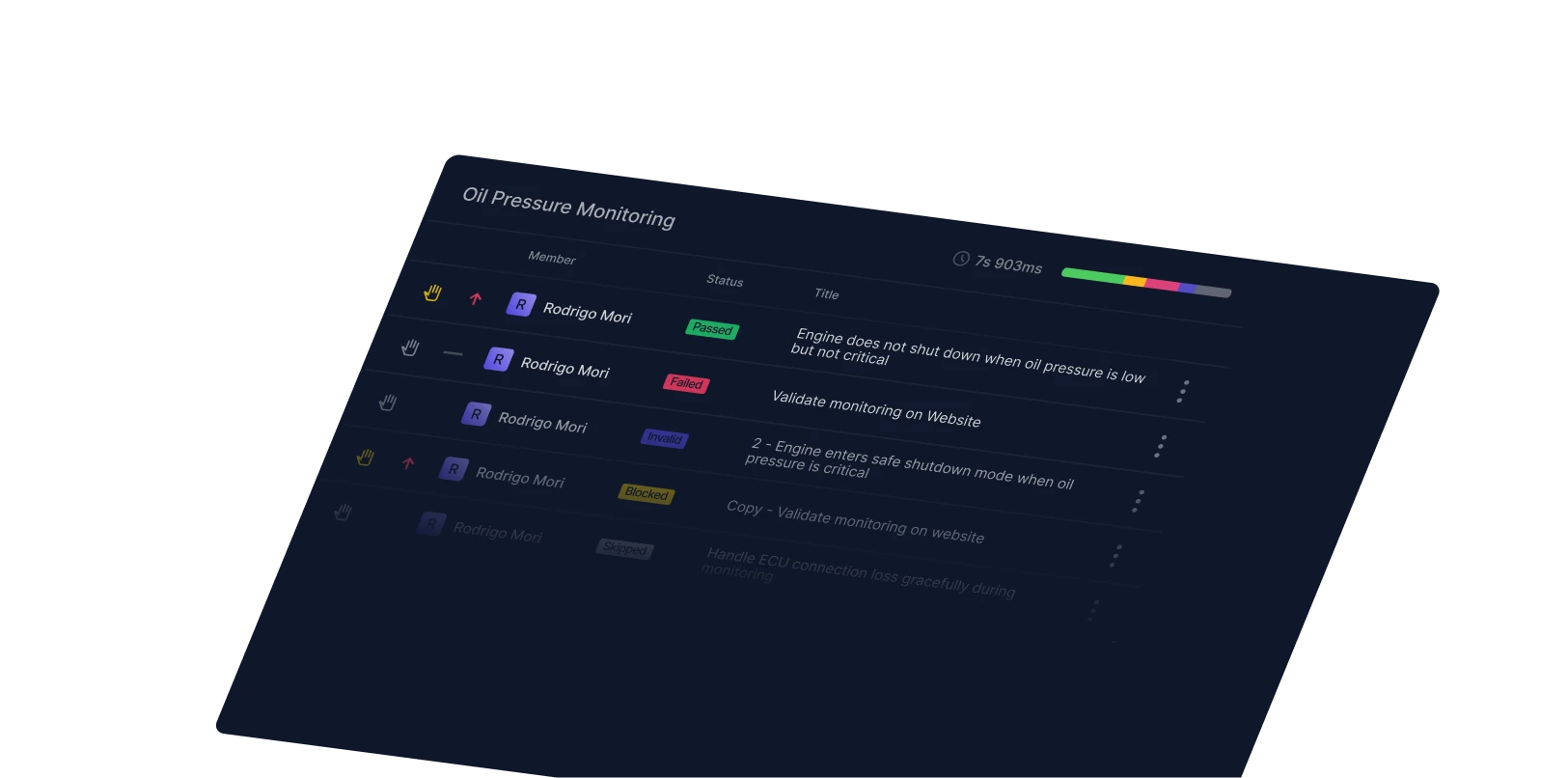

For this article, I’ll focus only on pull-request-based code reviews. With pull-request code reviews, a developer works on some code and then sends the code change to a peer for review in a pull request. In these cases, the code under review will not be passed to the testing phase until it has been approved by the reviewer, so the pull request is blocked by the review process.

The primary objective of blocking pull-request-based code review is to prevent bad code or defects from being pushed to production. However, another important goal of any code review is knowledge sharing. The idea is that once a reviewer reads the code, they will have a better understanding of it.

When comparing different tools or processes, it’s important to consider their effectiveness in terms of both price and value. Price refers to the cost of implementing the tool and/or process, while value refers to how well the tool and/or process accomplishes its intended goal. Weighing these factors can help determine which option is ideal for your situation.

The cost of a code review goes beyond actual money spent

Code reviews don’t just cost you in terms of tool subscriptions and the hours spent reviewing code. They also come at the expense of delayed code, increased amounts of context switching, and negative effects on social dynamics within your team.

Delayed code

Google, Meta, Microsoft, and GitLab publish a lot on their code review processes, and they all point out the obvious: code reviews delay development.

In many companies, code reviews can take up 20% or even 30% of the development time. Any delay introduces the cost of delay: “the economic impact of a delay in project delivery.”

Often, features are built because there is a hypothesis that the delivered functionality will benefit the users and provide value for them. However, even if the hypothesis is correct — which isn’t always the case — the longer it takes to deliver the feature, the greater the cost of delay to the company.

To understand the direct cost of delay that your team pays, try measuring the code review “cycle time,” which is the total time from when the code was submitted for review to when the review was completed. Additionally, if your team develops product ideas (features and epics) that have projected value, calculate the cost of delay that code reviews have incurred over the past several months.
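As a rough illustration of that measurement, here is a minimal Python sketch. The timestamps are made-up sample data, and `cost_of_delay` assumes the projected value accrues evenly over a 168-hour week, which is a simplification of my own rather than a standard formula. Swap in timestamps exported from your own pull-request tool.

```python
from datetime import datetime
from statistics import median

# Hypothetical sample data: (submitted for review, review completed).
reviews = [
    (datetime(2024, 3, 4, 10, 0), datetime(2024, 3, 4, 16, 30)),
    (datetime(2024, 3, 5, 9, 15), datetime(2024, 3, 6, 11, 15)),
    (datetime(2024, 3, 7, 14, 0), datetime(2024, 3, 7, 15, 0)),
]


def cycle_time_hours(submitted, completed):
    """Elapsed wall-clock hours between submission and completed review."""
    return (completed - submitted).total_seconds() / 3600


def cost_of_delay(hours_delayed, value_per_week):
    """Value forgone while a change waits.

    Assumption: the projected value accrues evenly over a 168-hour week.
    """
    return value_per_week * hours_delayed / 168


cycle_times = [cycle_time_hours(s, c) for s, c in reviews]
total_hours = sum(cycle_times)
median_hours = median(cycle_times)

print(f"median cycle time: {median_hours:.1f} h, total waiting: {total_hours:.1f} h")
```

Wall-clock hours overstate the delay if nobody works nights or weekends, so you may prefer to count business hours only. The median is reported alongside the total because a few long-running reviews can dominate an average.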

Context switching

Companies sometimes require code reviews to occur within a certain period of time, such as within 1 business day or in under 4 hours. Some companies even reserve specific times of the day for code reviews. Both approaches require reviewers to switch contexts from their current task to perform the review and then switch back, potentially multiple times if the review requires multiple steps.

Several publications (1, 2, 3, 4, 5) on multitasking and context switching have shown that it's more effective to work on one task at a time with as few context switches as possible. According to studies, it takes an average of 23 minutes to switch contexts. If you multiply the number of code reviews a person does during a month by those 23 minutes and double the result (to account for switching back to other work), you'll get the overall time spent just on switching tasks. Even if a reviewer only stops once a day to do code reviews, that’s roughly 46 minutes of work lost each day to context switching.
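The arithmetic is simple enough to sketch in a few lines of Python. The 23-minute figure comes from the text above. The function name and the assumption of one review per working day over roughly 20 working days a month are mine, chosen only for illustration.

```python
SWITCH_MINUTES = 23  # average switching cost quoted in the text


def monthly_switching_hours(reviews_per_month, switch_minutes=SWITCH_MINUTES):
    """Hours lost per month to switching into and back out of each review."""
    switches = reviews_per_month * 2  # one switch in, one switch back
    return switches * switch_minutes / 60


# Assumption: one review per working day, about 20 working days a month.
hours_lost = monthly_switching_hours(20)
print(f"{hours_lost:.1f} hours per month")
```

Under those assumptions, the estimate comes to a little over 15 hours a month per reviewer, before counting the time spent on the review itself.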

Social dynamics

Every human being associates the results of their work with themselves. Valuing something we put effort into is innate to our nature. According to the Self-Determination Theory by Deci and Ryan, people have innate psychological needs for competence, autonomy, and relatedness. Work often provides an avenue to fulfill these needs, especially the need for competence. When people receive positive feedback, it validates their sense of competence. Conversely, negative feedback or criticism can undermine this, leading to emotional distress.

The more effort a person puts into a task, the more vulnerable they become to criticism, especially if the criticism feels unfair.

The internet is full of posts and articles on how to "solve" this negativity problem. Most of these posts talk about providing more positive feedback or wrapping the criticism between two layers of positive feedback, known as the "sandwich technique." However, studies have shown that these approaches do not yield good results, and candid criticism is preferable and more useful.

Of course, candid criticism only works if the person being critiqued feels the criticism is fair. If the person didn’t know what was expected of them or how to properly perform the task in the first place, criticism will feel particularly unfair. This negative dynamic occurs when the team fails to agree upon a technical implementation before the reviewee begins the work.

Reviewers are also at risk of negative social dynamics. When a reviewer is asked to judge objectively if code is good or bad, they are essentially encouraged to identify imperfections. Arguments and conflicts in code reviews are unfortunately common in the industry and can be very detrimental to team morale and connection.

It’s not easy to measure the level of negativity brought about by these social dynamics, but it’s clear that the reviewee-reviewer relationship can be tense and negatively impact overall morale and productivity.

Code review is valuable in theory, but not always in practice

The value of code review stems from its goal of preventing bad code and defects from reaching production.

Preventing bad code or defects

A defect or a bug is defined as “an error, flaw or fault in the design, development, or operation of computer software that causes it to produce an incorrect or unexpected result, or to behave in unintended ways.”

Just reading this definition makes it obvious why multiple studies (1, 2) have shown that code review isn't usually effective in finding defects. Most reviewers simply read the code, rather than running it to find actual defects against the specification. People's minds aren't good compilers, and it takes a full Quality Control (QC) cycle to test the code against the specification and confirm that it works with no defects. When someone just reads the code, they usually only catch the obvious low-level or logical issues, which are just a small proportion of bugs and defects. Furthermore, the larger the code change, the harder it is to review closely.

How valuable is a minimal probability of finding low-level issues in the code that most linter tools can be configured to find automatically?

Of course, the reviewer can actually compile and run the code, perform quality control, and check the implemented feature against the specification, but this would take a significant amount of time, which is why the task is generally reserved for QC specialists rather than code reviewers.

Manual code reviews may still find defects in the code, but you shouldn’t assume it’s the most efficient way to identify issues. To see the defects found and fixed during your reviews, check the last few months of tasks and gather the statistics for yourself.
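One way to gather those statistics is to label each past review comment by hand and count the labels. The Python sketch below uses a made-up list of labels, and the category names are hypothetical, so substitute whatever classification suits your team.

```python
from collections import Counter

# Hypothetical labels assigned by hand to past review comments.
comments = ["style", "defect", "question", "style",
            "naming", "defect", "style", "praise"]

counts = Counter(comments)
defect_share = counts["defect"] / len(comments)

print(f"{counts['defect']} of {len(comments)} comments flagged a defect "
      f"({defect_share:.0%})")
```

Comparing the defect share with the share of style and naming comments gives a first hint of how much of the review effort a linter could absorb.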

Knowledge sharing

Knowledge sharing is the activity of exchanging knowledge, which can be information, skills or expertise, among people.

In code reviews, the goal of knowledge sharing is achieved from two perspectives: the reviewer learns from the reviewee by reading the code, and the reviewee learns by submitting the code for review and receiving feedback.

Reviewer learning

The code produced for a task is the result of an intellectual endeavor involving problem analysis and solution synthesis. A reviewer who reads the code only sees the solution to a problem, not the analysis and synthesis that led to it.

Even if the code included the analysis and synthesis (as textbooks do), current scientific consensus suggests that distributed practice and practice testing are the most effective ways of acquiring new knowledge, while underlining and summarization while reading are the least effective. Simply reading code does not lead to much knowledge acquisition at all.

Reviewee learning

It’s often believed that a reviewee can learn something by receiving feedback on code submitted for review. If we followed that way of thinking in terms of education, students would be expected to submit homework without instruction and then learn from their teacher’s corrections. But a successful teaching process involves activities performed by both the teacher and the student:

- The teacher provides information, theoretical background, and guides the student through practical activities. The understanding of "how" is synchronized with the student before any solo practice is done.

- The student spends time practicing to apply the acquired knowledge and seeing it in action. This is where the acquired knowledge is solidified.

- The teacher verifies results, highlights mistakes, and provides additional information if needed. The teacher checks whether the student has acquired and used the knowledge correctly by comparing the practice results with the synchronized understanding that occurred in the first step.

- The student practices again. If there were mistakes in step 3, they are corrected, and the difference is minor.

The usual peer code review process skips the first essential step of providing the information and theory necessary for successful task completion. The process typically goes as follows:

- The student (reviewee) spends time practicing and completing the task.

- The teacher (reviewer) verifies the results and highlights mistakes.

- The student (reviewee) repeats the practice step.

In this process, the reviewer does not synchronize their understanding of "how" with the reviewee before the task is done. As a result, the practice step only reinforces existing knowledge and does not lead to new knowledge acquisition.

The third step is also flawed because there was no synchronization of understanding before the task was completed. If a lack of knowledge is found at this step, it has already been reinforced by the second practical step, making it ineffective to correct at this point. Again, this is like asking a student to solve a math problem before teaching them the formula to use and then expecting the student to figure out the formula through the teacher’s corrections.

In both the math problem and code review examples, the student/reviewee is working only with existing knowledge and is unlikely to gain new knowledge.

Determining the cost & value of code reviews — and exploring alternatives

Once the price for the code review practice is determined and its value is understood, it can be compared to other process activities that provide similar (or better) value.

In this review, I will be looking at pair programming and mob programming practices.

Pair programming price

Zero delay

Pair programming is inherently synchronous, with two engineers working on one problem simultaneously. Some might say that pair programming is a form of continuous code review, as issues are effectively prevented from appearing in the code. This eliminates the need for a separate review cycle after the code is written and the problem is solved.

With pair programming, the delay is zero, and the cost of delay is also zero.

Salary cost

Pair programming involves two engineers working on the same task, which might lead one to think that one task costs twice as much.

However, this is only partially true. The cost of production encompasses the entire process, from design and development to testing. Studies have shown that design and development with pair programming is faster. It makes perfect sense: two heads are better than one, problems are solved more quickly, solution quality is higher, and rework is minimized.

No context switching

Pair programming requires two programmers to focus on a single goal together, so there is no context switching. By eliminating context switching, pair programming increases productivity and reduces mental fatigue.

Pair programming has proven value

Unlike the hypothetical value of code reviews, the practice of pair programming has more tangible results. In fact, it offers many of the benefits that code review falls short on, including reduced bugs and improved knowledge sharing.

Preventing bad code or defects

Multiple studies (1, 2) have shown that pair programming leads to much better code quality and prevents defects from appearing.

The reason is simple: two peers analyze the problem and come up with a solution together, so a defect that one of them misses is more likely to be caught by the other before it ends up in the resulting solution.

Knowledge sharing

Most studies on pair programming highlight its efficiency in knowledge sharing. This is not surprising: pair programming involves participants in active learning. Both participants are constantly engaged in the task, with the driver actively coding and the observer reviewing and thinking ahead. This is more effective than passive learning methods, such as watching a tutorial or reading documentation.

In addition, the observer provides immediate feedback to the driver, allowing for quick corrections and adjustments. This real-time feedback loop is crucial for learning and can help to internalize best practices.

In pair programming, both peers learn from each other all the time because they are not merely reading the code, but both participating in problem-solving and internalizing new knowledge by discussing and practicing it.

Software developer Ron Jeffries shared that during his first real pair programming session, he assumed he’d be teaching the young, less experienced developer. However, the young programmer started asking questions about Jeffries’s methods and teaching him.

“...Pretty soon, he was suggesting what I should do next, meanwhile calling out my every formatting error and syntax mistake… this most junior of programmers was actually helping me…Since then, that’s been my experience every time in pair programming. Having a partner makes me a better programmer.” - Ron Jeffries

Social dynamics

Compared to code review, pair programming creates fewer negative social dynamics. It’s seen as partner work where two engineers collaboratively solve problems. There is no criticism or judgment of the work result, as it is produced by both individuals working together.

An online survey of professional pair programmers revealed that 96% of respondents enjoy working in pairs more than programming alone. Moreover, several surveys showed that programmers felt more confident in their work when they pair programmed.

Mob programming offers similar value, but can come at a higher price

I’ve discussed mob programming benefits in a previous post. Here, I will compare it to code reviews and pair programming.

Like pair programming, mob programming doesn't involve any delays or costs associated with context switching or negative social dynamics.

However, the salary cost is much higher since the entire team works on only one task at a time. This increased cost may be offset by the improved knowledge sharing that comes with mob programming compared to pair programming.

Mob programming value

Preventing bad code or defects

Compared to pair programming, mob programming significantly reduces the number of defects as more individuals are collaborating simultaneously in solving the problem and writing the code.

Knowledge sharing

Knowledge sharing is where mob programming shines the most, as the entire team acquires the knowledge.

Social dynamics

Because mob programming involves the entire team, it creates a very positive, teamwork-focused environment. In fact, in my previous article, I suggest applying mob programming practices to other types of work beyond coding, particularly tasks that require various disciplines. For example, if you are working with writers, designers, and developers, tension can grow between teams due to a lack of understanding of each other’s skills. All too often, department-centric work functions like code reviews, with negativity brewing as each department criticizes the others without any clear understanding of each department’s knowledge or goals for the project.

Mob programming may be pricier than pair programming in terms of salary cost, but for large and/or cross-department projects, it may yield the best results in terms of value, knowledge sharing, and social dynamics within your team.

Don’t default to code reviews

Working towards making processes more efficient is a worthwhile goal for any engineer. Often, processes like code reviews are carried out simply because they've been borrowed from another organization, or because no one has taken the time to assess their current relevance or effectiveness.

Through this article, my aim was to offer a framework for comparing different processes and to suggest alternatives to standard code review. I recommend gathering data on the costs and value of each activity to determine whether it is rational and suitable for your specific situation.

References:

- Chapter 11 - Factors Critical to the Success of Knowledge Management

- The Knowledge-Creating Company

- A Review of Software Inspections, Porter, Siy, Votta, 1996

- Expectations, Outcomes, and Challenges of Modern Code Review, 2013

- Investigating technical and non-technical factors influencing modern code review, 2015

- Information Needs in Contemporary Code Review, Pascarella, Spadini, Palomba, Bruntink, Bacchelli, 2018

- The Cost of Interrupted Work: More Speed and Stress, Mark, Gudith, Klocke, 2008

- The myth of multitasking, Nass, 2013

- Code Reviews Do Not Find Bugs. How the Current Code Review Best Practice Slows Us Down

- Modern Code Review: A Case Study at Google, 2018

- Reconfiguration of task-set: Is it easier to switch to the weaker task?, Monsell, Yeung, Azuma, Psychological Research, 2000

- Multitasking: Switching costs, American Psychological Association

- Executive Control of Cognitive Processes in Task Switching, Rubinstein, Meyer, Evans

- The correlation between organizational culture and knowledge conversion on corporate performance

- A Meta-Analysis of Ten Learning Techniques

- The Costs and Benefits of Pair Programming

- The effectiveness of pair programming: A meta-analysis

- Evaluating Effectiveness of Pair Programming as a Teaching Tool in Programming Courses

- Increasing Quality with Pair Programming - An Investigation of Using Pair Programming as a Quality Tool

- The Case for Collaborative Programming

- Strengthening the Case for Pair Programming

- Mob vs Pair: Comparing the two programming practices – a case study

- Mob Programming: A Qualitative Study from the Perspective of a Development Team

- Leveraging the Mob Mentality: An Experience Report on Mob Programming
