
ChatGPT monetisation and ads / propaganda

Vitaly Sharovatov

Human civilization has a long history of inventions that have affected societies both positively and negatively. For example, gazettes, radio, television, and the internet have given millions of people unprecedented access to information and content.

Mobile phones and apps have expanded this access even further, allowing people to consume content on the go. However, as applications compete for users’ attention, they have started producing shorter and more engaging content, leading to the rise of platforms such as Facebook, Twitter, Instagram, and TikTok. Moreover, push notifications constantly pull people from one context to another. Psychologists and educators are alarmed by the growing influence of ‘clip thinking’:

> [a process where] information is consumed fragmentarily in a short, vivid message embodied in the form of video clip or television news. ‘Clip thinking’ is considered as a process of reflecting a multitude of different objects, disregarding the connections between them, characterized by the complete heterogeneity of incoming information, the high speed of shifting between fragments of the information flow, the lack of the integrity of the perception of the surrounding world.

There is a trend towards shallow thinking and a decreased ability to concentrate on a specific topic for an extended time, which may reduce cognitive ability.

Some reports even suggest that people have stopped reading on the web and now scan information instead:

> 79 percent of our test users always scanned any new page they came across; only 16 percent read word-by-word

Scientists continue to study the constant distraction caused by social media and its harmful effects on performance.

Specialists in productivity and time management promote techniques such as Pomodoro to help people counter the negative effects of ‘clip thinking’ in a constantly distracting environment.

Did the inventors of television or the publishers of gazettes consider the adverse effects their inventions would have on societies? Do companies like Facebook or Google care about their products’ negative effects on the world, or do they focus only on increasing revenue?

The previous article expressed concerns about the epistemological effects of LLMs.

This post focuses on another potential negative effect of this progress: ChatGPT and other LLMs could exert a powerful, yet unnoticeable and untraceable, influence on people's opinions, for either commercial or propaganda purposes.

ChatGPT is currently free to use, but its potential monetisation raises ethical concerns.

The human mind is constantly changing and adapting to new experiences and information. Every interaction and conversation can alter one's attitudes and beliefs, strengthening or weakening them.

This ability to change can be used ethically to improve an individual’s or a society’s well-being.

In psychotherapy, the reframing technique is used to help people work through trauma:

> Reframing as a counseling technique provides an alternative perspective relating to the perceptions of individuals in group counseling. If a constructive reframe is assimilated by a group member, the person's options for adaptive functioning are broadened in a therapeutic direction.

In sociology, the deep canvassing technique helps overcome prejudice:

> Existing research depicts intergroup prejudices as deeply ingrained, requiring intense intervention to lastingly reduce. Here, we show that a single approximately 10-minute conversation encouraging actively taking the perspective of others can markedly reduce prejudice for at least 3 months

Michele Belot and Guglielmo Briscese researched what prompts people to engage with others who think differently about key issues such as abortion laws, gun laws, or immigration:

> We ... confirm Allport’s hypothesis that contact is effective in altering views when common grounds are emphasized, both when these are common views on human rights and on basic behavioral etiquette rules.

> ... take-away message from this paper is [that] most people are willing to listen to others with opposite views, and a small but crucial fraction of people is willing to change their views after listening to others they disagree with. Most importantly, overall views become less polarized after listening to others when common grounds are emphasized. These findings suggest that interventions aimed at bridging the partisan gap can be effective, particularly those that offer opportunities to voluntarily engage with others and emphasize common grounds.

In this way, Michele Belot and Guglielmo Briscese helped people change their minds and bridge the societal divide.

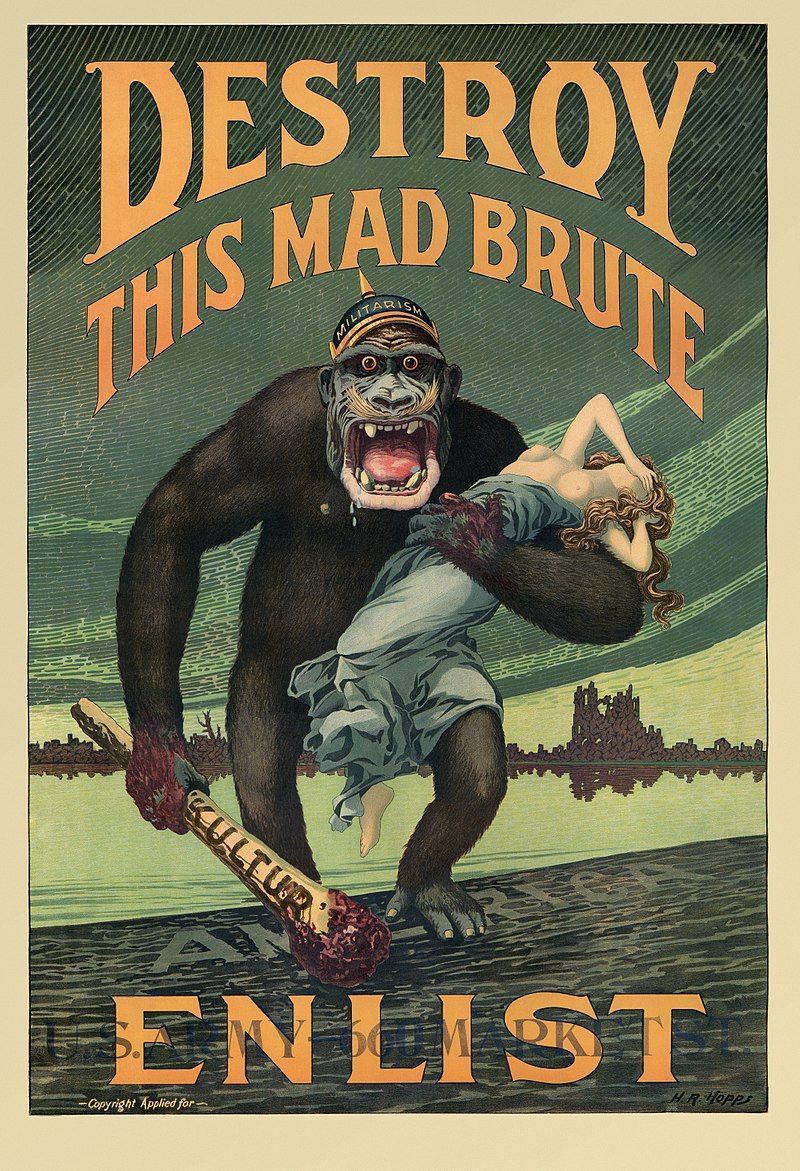

Sometimes, however, the human mind’s ability to change is exploited unethically and manipulatively, as in the Cambridge Analytica case.

A few years ago, Cambridge Analytica used data from Facebook to try to influence election outcomes by targeting specific individuals with tailored messages designed to change their views on particular issues:

> It used various methods, such as Facebook group posts, ads, sharing articles to provoke or even creating fake Facebook pages like "I Love My Country" to provoke these users.

> "When users joined CA's fake groups, it would post videos and articles that would further provoke and inflame them," Wylie wrote.

Is it realistic to assume that OpenAI will not monetise the ability to influence people and change their minds?

By default, ChatGPT is instructed to avoid generating biased content based on race or gender:

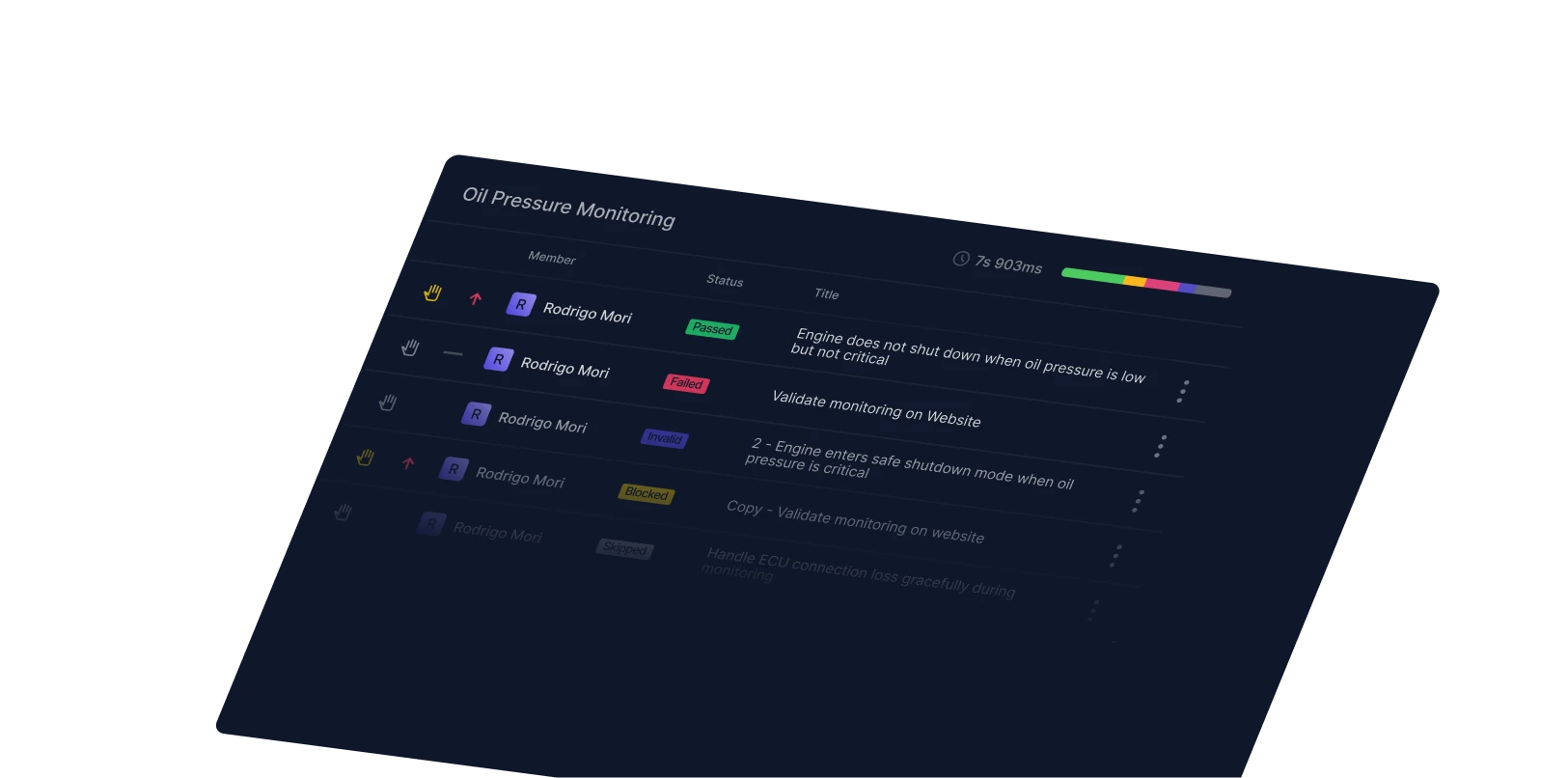

ChatGPT is also very good at rewriting text, even mimicking the style of a specific author.

Additionally, it’s easy to instruct ChatGPT to emphasise certain topics or ideas.

What if monetising ChatGPT allowed not only targeting users based on their requests and preferences, but also customising the outputs these users receive?

What if the outputs were slightly altered: the tone of voice changed, or specific information or examples of certain brands added?

Will people ever be able to notice such minor changes?

Google and Facebook ads are visible, and Cambridge Analytica’s influence was hard to spot, but alterations to ChatGPT’s output could go completely unnoticed.

Could this be the real reason Google declared a ‘code red’?

Is now the right time to consider the adverse effects of this newly invented technology and tackle them before it is too late?

Update 14 March 2023:

Scientists at Stanford are investigating how LLMs can be used for propaganda. Their research says:

> Our results show the current generation of large language models can persuade humans, even on polarized policy issues. This work raises important implications for regulating AI applications in political contexts, to counter its potential use in misinformation campaigns and other deceptive political activities.

References:

- How Minds Change: The Surprising Science of Belief, Opinion, and Persuasion

- Reframing: A therapeutic technique in group counseling

- Durably reducing transphobia: A field experiment on door-to-door canvassing

- How are memories formed?

- The specificities of the thinking process in adolescents. Clip thinking

- Dichotomy of the 'Clip Thinking' Phenomenon

- Features of clip thinking and attention among representatives of generations X and generations Z

- Why Are We Distracted by Social Media? Distraction Situations and Strategies, Reasons for Distraction, and Individual Differences

- Media Multitasking Effects on Cognitive vs. Attitudinal Outcomes: A Meta-Analysis
